AI everywhere: the ketchup-on-everything syndrome

Posted on March 23, 2026 • 19 minutes • 3879 words

Table of contents

- What is AI-washing and why it smells off

- Influencers, linkedinfluencers and the AI slop diet

- When putting “AI-powered” in big letters scares away customers

- AI-washing + AI slop: the internet as an airline food tray

- Chamber of horrors: AI where it gets in the way (and really annoys)

- How to smell an unnecessary “AI feature”: the marker test

- Why this happens: incentives, FOMO and PowerPoints with too many future-tense verbs

- And so, is anyone using AI well?

- What we could do instead of slapping “with AI” on everything that moves

- Less “AI-powered”, more “this solves X for you”

- Quick glossary

- Sources and references

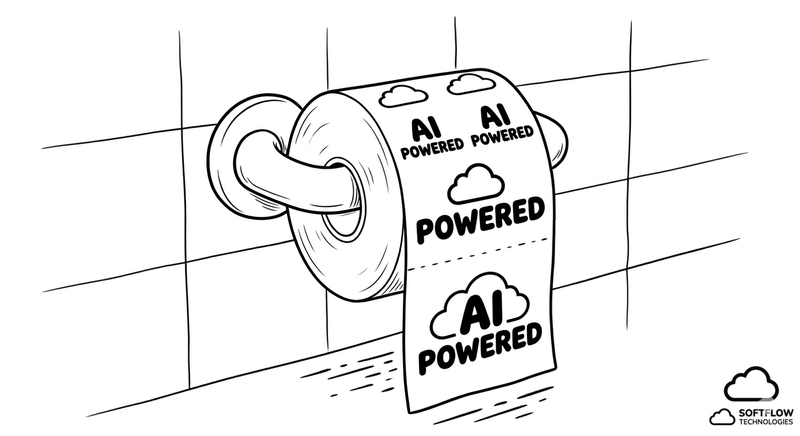

Every so often, the tech industry latches onto a word and repeats it until it’s completely hollow. First it was “cloud”, then “blockchain”, then “web3”. Now the magic word is “AI”. If you don’t put “AI-powered” on your product page, it looks like your product comes in black and white with a Nokia 3310 thrown in for free (for my younger readers: those were the indestructible phones that only made calls and lasted a week on a single charge).

The result is that you don’t even have to look anymore: there’s AI in everything.

Literally: fridges that “predict” when to buy milk, “smart” toasters, note-taking apps that “summarize your own thoughts” (which takes some serious faith in the user), text editors that write things for you that you didn’t want to write in the first place. The problem isn’t the technology; it’s what we’re doing with it: AI-washing, which basically means slapping a coat of paint on any shoddy automation and pretending there’s a machine learning PhD trapped inside.

Pull up a chair, because the show is long. We’ll look at exactly what AI-washing is and why it’s more dangerous than it seems, I’ll take you through a little chamber of horrors of “AI features” that make life worse, and we’ll see how an entire industry of AI slop has built up around them. And, like a tiny ray of light in the darkness, I’ll give you some ideas on what we could actually be doing right if we’d only lift our eyes from the PowerPoint for a moment.

What is AI-washing and why it smells off

This term isn’t something Twitter (rest in peace) made up: AI-washing is the tech cousin of greenwashing. Just like there was a time when everything was “eco”, “green” and “sustainable” even if the only green thing was the logo, we now live in the era of compulsive “AI-powered” labeling.

The serious definition, in case anyone wants to get technical: AI-washing is exaggerating, distorting, or outright inventing the use of AI in a product or service to cash in on the hype, even when under the hood there are four if/else statements and a badly-hidden spreadsheet. It’s claiming the AI medal when what you’ve actually built wouldn’t pass the first YouTube tutorial.

The symptoms repeat so often they look like a consulting firm’s template: the brochure says “intelligent”, “smart”, “AI-powered” everywhere, but never explains which model, with what data, to do what. Simple automations — rules, filters, searches — are sold as “advanced machine learning systems”. Magical autonomy is promised, but in reality there’s a human behind the scenes correcting, approving, or doing the actual work in a mad rush.

We’ve gone so far overboard that even regulators have raised an eyebrow. The SEC has already fined financial companies for selling “AI-driven” strategies that weren’t using AI even remotely, exactly the kind of AI-washing we’re talking about here. And well-known consultancies have been measuring something that won’t surprise you: public trust in AI is suffering precisely because of these empty promises that don’t hold up in real use.

Influencers, linkedinfluencers and the AI slop diet

If AI-washing were only the fault of nervous marketing departments we might get through it sooner, but no: there’s a whole smoke-and-mirrors industry dedicated to amplifying it.

There’s the standard tech influencer, that person who used to explain why “everything had to be blockchain or you’d be left behind”, and who has now reinvented themselves as a prophet of generative AI. The script is simple: post a video every day, look incredibly enlightened, and drop gems like “if you’re not using AI for EVERYTHING, you’re professionally dead.” The funny thing is that half of those videos are generated with AI, and the result is exactly what’s now affectionately known as AI slop: mushy, generic, interchangeable content, like baby food but with buzzwords.

For anyone wondering: yes, AI slop now has its own Wikipedia entry, defined as that digital content made with generative AI, mass-produced, of dubious quality, and designed to scrape clicks and money from the attention economy. Merriam-Webster even named it Word of the Year for 2025, which says quite a lot about the landscape we’re creating.

The best part is when they take offense. The moment anyone says “AI slop” in public, or suggests that maybe not everything a model generates needs to be published, along comes the motivated CEO or the flavor-of-the-month guru saying that’s “a lack of vision”, “resistance to change”, “bad energy.” Not long ago, a Microsoft executive had to take to LinkedIn to ask everyone to please stop calling AI-generated content “slop”, while defending a “high-intention” use of the technology. Result: the term “Microslop” started circulating in approximately zero seconds. If the cap fits, wear it.

When certain bigshots at large companies respond with fury to the idea that perhaps we’re flooding the internet with synthetic garbage, what comes to my mind is another classic: “where there’s smoke, there’s fire”. If you didn’t feel a little called out, you wouldn’t bother writing kilometer-long threads explaining how “this is actually the future of content”. The future, apparently, is that all text sounds like a bank brochure written by a model that didn’t get enough sleep.

And then there are the linkedinfluencers. Those who’ve discovered the perfect combo: using AI to generate posts about how important it is to use AI, garnished with emojis, falsely intimate confessions, and a takeaway of “step out of your comfort zone.” It’s AI-washing squared:

First I exaggerate what my product does with AI, then I use AI to fill my posting calendar where I brag about how innovative I am with AI.

It’s not just theory: even the business press has started warning executives that relying on AI to write their LinkedIn posts produces exactly that — executive AI slop — and that people can see right through it. LinkedIn acknowledges that a CEO’s posts get enormous visibility, and serious people are saying openly: “if you’re a leader and you’re posting slop, your team will copy you at ChatGPT speed.” That’s not digital transformation — that’s a sandwich made of bread and bread.

Reviewing this section I realized I may have gone slightly overboard with the idioms, but hey — if you can’t take the heat, get out of the kitchen.

When putting “AI-powered” in big letters scares away customers

Anyone who has suffered through a useless chatbot already knows this: when a product plasters “with AI” all over the place, the first reaction isn’t excitement — it’s distrust. And it turns out that data backs up that gut feeling.

A study published in the Journal of Hospitality Marketing & Management analyzed what happened when products like TVs, vacuum cleaners, or healthcare services were described as “AI-powered” versus versions described simply as “high-tech”. The conclusion: purchase intent dropped when “AI” was explicitly mentioned. In short, less desire to buy the “AI” TV than the “high-tech” one.

Media outlets like Fortune and Futurism have covered these findings with headlines that sum up the general feeling quite well: “Why do I need AI in my coffee maker?” and “products labeled with AI are turning consumers away”. The authors themselves talk about anxiety: people suspect that “AI” means more data collected, more things that can go wrong, and more opaque decisions made “for our own good” that nobody asked for.

Other brand trust analyses point to the same thing: every time a product promises miraculous AI results and then fails to deliver, a little bit of user faith breaks… and the next provider that actually uses AI seriously pays that bill too. It’s like the friend who’s always late: eventually you stop believing them even when, for once, they actually have a real excuse.

And the ironic thing is that this is already showing up on the bottom line. Companies are starting to remove “AI-powered” from their brochures when they see it’s reducing confidence rather than boosting sales. It turns out that coating your product in AI has a cost: the cost of people who turn around and walk away.

AI-washing + AI slop: the internet as an airline food tray

The problem isn’t just the absurd gadgets or the “Ask our AI assistant” button on every application. The problem is that, through AI-washing and AI slop, we’re turning the internet into an airline food tray: everything tastes the same, everything comes wrapped in plastic, everything has that “this was once a real meal” quality, and nobody can complain because, technically, they were fed.

You search for something online and find articles cloned with the same structure, same tone, same sentences. Go to product reviews and they’re so suspiciously neutral they could have been written by a model with an allergy to taking sides. And the product pages themselves have copy that seems to have been through five rounds of “improve this text with AI” until it’s so polished it says nothing at all.

It’s not just perception. Linguists and technologists have written entire articles explaining where the term “slop” comes from when applied to AI and how it connects to the idea of low-quality filler, designed to pad, not to nourish. The consensus is fairly clear: it’s not that AI forces you to produce garbage; it’s that a lot of people are using it for exactly that.

And of course, when someone names that gruel, there are executives who take it as a personal attack. If your content strategy is to fire prompts at a wall and hit publish, maybe the problem isn’t the criticism — maybe it’s the diet you’re serving.

Chamber of horrors: AI where it gets in the way (and really annoys)

We could put together a traveling exhibition called “AI in places nobody asked for it.” The catalog would be glorious.

A website that used to have a clear form — “enter your booking reference, choose a date, done” — decides to replace it with an AI-powered conversational chatbot. You open it and are greeted by a little figure saying “How can I help you today?” You reply “I want to change my flight”, and it asks you three questions you could already have answered in the form, takes longer, fails more often, and you end up exactly where you started but with your stress levels through the roof. The AI hasn’t solved anything: it’s inserted a layer of friction between you and what you wanted to do.

Another phenomenon worthy of study: products that used to work reasonably well — office suites, task managers, internal tools — that wake up one morning with a shiny new button: “Ask our AI assistant.” You press it out of curiosity and discover that what used to be a simple search now goes through a clunky chat that “didn’t understand your query”; that what used to be three clicks is now an unnecessary conversation; that the assistant knows nothing that the actual system didn’t already know, but wraps it all in a veneer of supposed magic that only adds frustration.

You only have to browse online to find complaints along the lines of: “they’ve stuffed a useless AI assistant into my favourite app and now it takes longer to do the same thing as before.” It’s the 2026 version of those toolbars that installed themselves in your browser without asking: nobody wanted them, everybody suffered through them.

A particularly painful category: features that, on paper, sound useful (“improve this slide”, “make this photo look better”, “rewrite this text”) but in practice break your workflow. Take PowerPoint Designer, which generates a beautiful slide… as a flat image you can’t edit. You wanted a better design, not a poster. Now, to change a single word, you have to redo the whole process or edit the image using external tools. You traded control for fireworks.

That pattern repeats in editors that convert your text into something “perfect” but lose the link to the source, or in design tools that generate variations that don’t fit your actual design system. AI here isn’t an assistant: it’s that colleague who walks into the kitchen, rearranges everything “their way”, and then leaves without doing the dishes.

Not forgetting the theme park of absurd gadgets: cat meow translators, “AI” mirrors that comment on your skin first thing in the morning, devices that promise to “improve your mood” by analyzing your voice while you’re screaming at traffic. Some are knowing gags; others, not so much — but they all have one thing in common: they use AI as a marketing hook for things that, let’s be honest, nobody needed. Beyond the laughs, what’s worrying is that they take up mental and media space that could be going to AI use cases that actually solve serious problems.

How to smell an unnecessary “AI feature”: the marker test

There’s a very simple trick — almost playground-level — for detecting AI-washing. Call it the marker test:

Take the brochure or product page. Mentally cross out, with your imaginary marker, every instance of “AI”, “AI-powered”, “smart”, “intelligent”. If the text still describes the same thing, you don’t need AI.

The pattern repeats so often it’s easy to spot:

- The benefit is described in empty terms (“revolutionizes”, “transforms”, “empowers”), but the text never explains what exactly it does better than before.

- No limits are mentioned, no need for supervision, no concrete use examples; just vague promises of magic.

- The cost in data, computing, and design complexity is high compared to the added value: spinning up GPUs to do something a simple filter already did perfectly well.

- There are no impact metrics, only nice-sounding stories: nobody can say whether the assistant has reduced support tickets, saved time, or improved anything measurable.

If you run that test and the “AI feature” still makes sense, congratulations: you might actually have something interesting on your hands. If, when you strip out the word “AI”, everything reduces to “we made a longer, more expensive form”, then what you had was probably nostalgia for building a “product of the future.”
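For anyone who prefers a script to an imaginary marker, here is a minimal Python sketch of the crossing-out step. The word list comes from the test described above; the function name `cross_out` and the sample sentence are made up for illustration. A regex can't judge whether what's left "still describes the same thing", so that part stays with the reader.

```python
import re

# The words the marker test crosses out (taken from the text; extend as needed).
BUZZWORDS = ["AI-powered", "AI", "smart", "intelligent"]

# Longest terms first, so "AI-powered" is matched before the bare "AI".
_PATTERN = re.compile(
    r"\b(?:"
    + "|".join(re.escape(w) for w in sorted(BUZZWORDS, key=len, reverse=True))
    + r")\b",
    flags=re.IGNORECASE,
)


def cross_out(text: str) -> tuple[str, int]:
    """Return the text with the buzzwords removed and the number of removals."""
    stripped, count = _PATTERN.subn("", text)
    # Collapse the runs of whitespace left behind by the removals.
    stripped = re.sub(r"\s{2,}", " ", stripped).strip()
    return stripped, count


# Hypothetical brochure sentence, invented for this example.
stripped, count = cross_out("Our AI-powered smart filter sorts your inbox.")
print(stripped)  # Our filter sorts your inbox.
print(count)     # 2
```

If the stripped sentence promises exactly what the original did, the marker has done its job: the feature was a filter all along.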

Why this happens: incentives, FOMO and PowerPoints with too many future-tense verbs

You don’t need a conspiracy theory to explain AI-washing. You just have to look at the incentives — follow the money.

Investors want to see “AI” in the slides. Many funds literally filter opportunities by keyword, and there are founders who openly admit they re-labelled existing features as “AI-driven” to get through the door. What used to be a “rules-based recommender” is now a “next-generation AI engine.”

Marketing lives on differentiation. If your competitor says their app “uses AI to recommend things”, you don’t want to be left with a sad “advanced recommendations”, even if under the hood you’re both using similar heuristics.

Inside companies there’s a monumental FOMO. Few things are more terrifying in a committee meeting than the phrase “we’re falling behind on AI.” So “AI-something” features get shoved into the roadmap without much cost-benefit analysis, just so someone can say at the next keynote that “we’re in this too.”

The result is an ecosystem where, for many people, it’s easier to justify internally “we’ve launched our AI assistant” than to say “we decided not to add AI here because it adds nothing.” The first one looks good on the slide; the second one looks good on the bottom line… but over a longer time horizon.

And so, is anyone using AI well?

It would be unfair, and frankly a bit absurd (and even malicious), to lump everyone together. While some are busy “adding AI” to everything like it’s ketchup, others have been using AI seriously for years, without needing to add fireworks.

There are the teams that use models to genuinely reduce repetitive work: ticket classification, extracting data from documents, incident prioritization, analyzing enormous volumes of text that nobody ever has time to read. They don’t sell it as “the artificial intelligence that changes everything” — they call it “the tool that takes the tedious work off our hands.”

Others integrate generative AI into flows where it makes sense: generating the first draft of a technical report, a support response, an automated test… and then actual flesh-and-blood humans review, correct, and sign off. No releasing the model unsupervised and hoping for the best.

And then there are those doing something truly revolutionary in 2026: measuring. Comparing before and after, checking whether times, errors, and costs go down. If the thing doesn’t add value, they remove it. No drama, no press release. Many of the quieter success stories come from exactly there: from people who decided not to turn AI into a religion, but into a very powerful screwdriver.

These companies tend to talk less about AI in their campaigns, but curiously they’re the ones getting the most out of it. They use it for what it is: a tool, not a dogma. They don’t need to sell you “AI-powered” on every corner because the value is felt in the website loading faster, support responding better, and things failing less. Meanwhile the neighbor with AI is proudly showing off their smart toaster that sends push notifications when the bread is done. Very useful if you live in a castle with the kitchen 800 meters away; slightly less useful in a 70 square meter flat where the toaster is two steps away.

What we could do instead of slapping “with AI” on everything that moves

The optimistic part of all this is that AI does serve many serious purposes. You don’t need to tear down the technology to criticise the circus. In fact, many of those who complain about AI-washing are precisely the ones using AI most heavily every day.

The most repeated advice — and the most boringly sensible — is to start with a real problem, not a pretty demo: where do I have clear friction today? Repetitive tasks that eat up time? Processes where the pattern exists but is hard to encapsulate in rules, and I have plenty of data? Ticket classification, data extraction from documents, summarizing long content, personalization where there’s sufficient signal… That’s where it makes sense to evaluate AI against classic alternatives.
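One way to make "evaluate AI against classic alternatives" concrete is to write the boring version first and score it. The sketch below is a hypothetical keyword baseline for ticket classification: the rules, labels, and sample tickets are all invented for illustration, not taken from any real system. The idea is simply that any model proposed for the job should beat this number on the same labeled sample before it earns a place on the roadmap.

```python
# Hypothetical keyword rules; the first label with a matching keyword wins.
RULES = {
    "billing": ("invoice", "refund", "charge"),
    "access": ("password", "login", "locked"),
}


def classify(ticket: str) -> str:
    """Classify a ticket with plain substring rules, falling back to 'other'."""
    text = ticket.lower()
    for label, keywords in RULES.items():
        if any(keyword in text for keyword in keywords):
            return label
    return "other"


def accuracy(samples: list[tuple[str, str]]) -> float:
    """Share of labeled samples that the rules classify correctly."""
    hits = sum(classify(text) == label for text, label in samples)
    return hits / len(samples)


# Invented labeled sample: (ticket text, expected label).
labeled = [
    ("I was charged twice, please refund", "billing"),
    ("Cannot login after password reset", "access"),
    ("The app crashes on startup", "other"),
    ("Where is my invoice?", "billing"),
    ("My card keeps getting declined", "billing"),  # the rules miss this one
]

baseline = accuracy(labeled)
print(f"Rule-based baseline accuracy: {baseline:.0%}")  # Rule-based baseline accuracy: 80%
```

If the fancy model lands at roughly the same score, the four if/else statements win on cost, speed, and explainability.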

Be transparent about what AI does… and what it doesn’t. Explain which part of the flow relies on models, what data is used, what errors are to be expected, where humans are reviewing. Transparency, according to work on brand trust, reduces distrust and improves acceptability even when people are generally skeptical about AI.

And measure impact seriously, not just “have a new button.” If after six months of “intelligent assistant” the only thing you can say is that you recorded a very nice demo video but nobody uses it because it takes longer — you’ve built an expensive paperweight. If instead you can show that a certain process takes 30% less time, that errors have been reduced, that support handles complex cases better… then maybe you’re looking at a legitimate use case.
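The arithmetic behind a claim like "30% less time" is nothing exotic. As a rough sketch, assuming you have handling times from before and after the feature shipped (the numbers below are invented, and `relative_change` is just an illustrative name), it boils down to comparing two averages:

```python
from statistics import mean


def relative_change(before: list[float], after: list[float]) -> float:
    """Relative change in the mean; -0.30 means the mean dropped by 30%."""
    baseline = mean(before)
    return (mean(after) - baseline) / baseline


# Invented handling times, in minutes per ticket.
before = [10.0, 12.0, 8.0, 10.0]  # mean: 10 minutes
after = [7.0, 8.0, 6.0, 7.0]      # mean: 7 minutes

change = relative_change(before, after)
print(f"{change:+.0%}")  # -30%
```

A real comparison would also want enough samples and a like-for-like period, but even this much is more evidence than a demo video.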

And finally, don’t use AI as legally combustible smoke. Selling as AI what isn’t, or promising capabilities the system doesn’t have, is starting to edge into misleading advertising and fraud territory — especially in regulated sectors. The SEC has already made it clear that AI-washing isn’t just ugly: it’s punishable.

Less “AI-powered”, more “this solves X for you”

The irony of this whole story is that AI has enormous potential… and we’re smothering it with a cloud of unnecessary nonsense and shrink-wrapped content. As long as there are toasters with opinions on your breakfast and apps that put a chatty bot where there used to be a clear button, users will keep associating “AI” with “something that complicates things” rather than “something that helps.”

The litmus test is this:

If you can’t explain in one clear sentence what real problem your AI feature solves and why it’s better than the non-AI version, you probably don’t need AI.

You’re lacking focus, honesty… and, with a bit of luck, the good sense to delete a few slides from your roadmap before someone seriously proposes adding a “conversational assistant” to the contact page.

And if you’re going to flood the internet with generated content anyway, do us a favour: review what you publish, put your voice, your judgment, and your experience into it. We’ve already got plenty of AI slop to wade through — and honestly, we’re quite full.

Quick glossary

In case any term slipped in without introducing itself, here are the credentials.

- Greenwashing: a marketing strategy of projecting an ecological or sustainable image without the product or company actually being so. AI-washing is its tech cousin.

- SEC (Securities and Exchange Commission): the financial markets regulator in the United States. Think of it as the sheriff of Wall Street.

- FOMO (Fear Of Missing Out): fear of being left behind. In the corporate world, the panic that your competitors are doing something with AI and you aren’t.

- GPUs (Graphics Processing Units): processors originally designed for graphics, now the raw muscle behind the training and running of AI models.

- Heuristics: practical rules or logical shortcuts that give reasonable results without needing a complex model. The “if the customer bought X, recommend Y” of everyday business logic.

- Prompts: the instructions or questions you give a generative AI model to produce something. The equivalent of telling a waiter what you want, except here the waiter sometimes brings you something else entirely.

- Roadmap: a medium-to-long-term product plan where features and dates are prioritized. The roadmap that everyone makes and nobody follows to the letter.

- Keynote: the main presentation of an event or conference, usually on a big stage with flashy slides. Where things are announced that then take twice as long to arrive.

Sources and references

Everything claimed in this article has someone behind it who said it first, measured it, or lived through it. Here are the culprits.

- Spotting AI-washing: How Companies Overhype Artificial Intelligence - Bernard Marr, Forbes. How to spot the AI makeup on products and services.

- AI-washing (video) - Audio-visual explanation of the AI-washing phenomenon.

- AI-washing enforcement - Thomson Reuters. SEC regulatory actions against AI-washing.

- AI-washing, brand trust & behavioural science - Emotional Logic, LinkedIn. The impact of AI-washing on brand trust.

- AI slop - Wikipedia. Definition and context of the term AI slop.

- What is AI slop? - The Conversation. Analysis of mass-generated AI content.

- Merriam-Webster’s Word of the Year for 2025 is AI’s “slop” - PBS. Slop as the 2025 word of the year.

- AI Agents, Leading with AI, AI Slop - Reddy Mallidi, LinkedIn. Microsoft executive and the slop term controversy.

- Notes on “slop” - Etymology Substack. Origin and evolution of the term slop applied to AI.

- AI slop replaces corporate spin as execs… - Robyn Sefiani, LinkedIn. How AI slop is replacing corporate spin.

- AI products are scaring away customers - Fortune. Study on how the “AI” label reduces purchase intent.

- Study: consumers turned off by products with AI - Futurism. Consumers reject products labeled with AI.

- When did “slop” come to mean bad AI generated… - Reddit r/etymology. Discussion on the origin of the term slop in the AI context.

- How to build a brand when AI controls visibility - 42DM. Guide on brand trust and transparency with AI.

- AI-washing reel - Instagram. Audio-visual content on the phenomenon.