From "Move Fast" to "Ship Crap": When Speed Eats Quality

Posted on February 15, 2026 • 8 minutes • 1604 words

Table of contents

- When “moving fast” turns into “breaking everything”

- Spectacular crashes caused by rushing

- Everyday life: products that “work”, but feel awful

- OKRs, velocity, and the illusion of progress

- Where we are now: more features, more noise, same mess

- So now what? From “move fast” to “move smart”

- Quick glossary (so nobody gets lost in the buzzwords)

- Sources and references

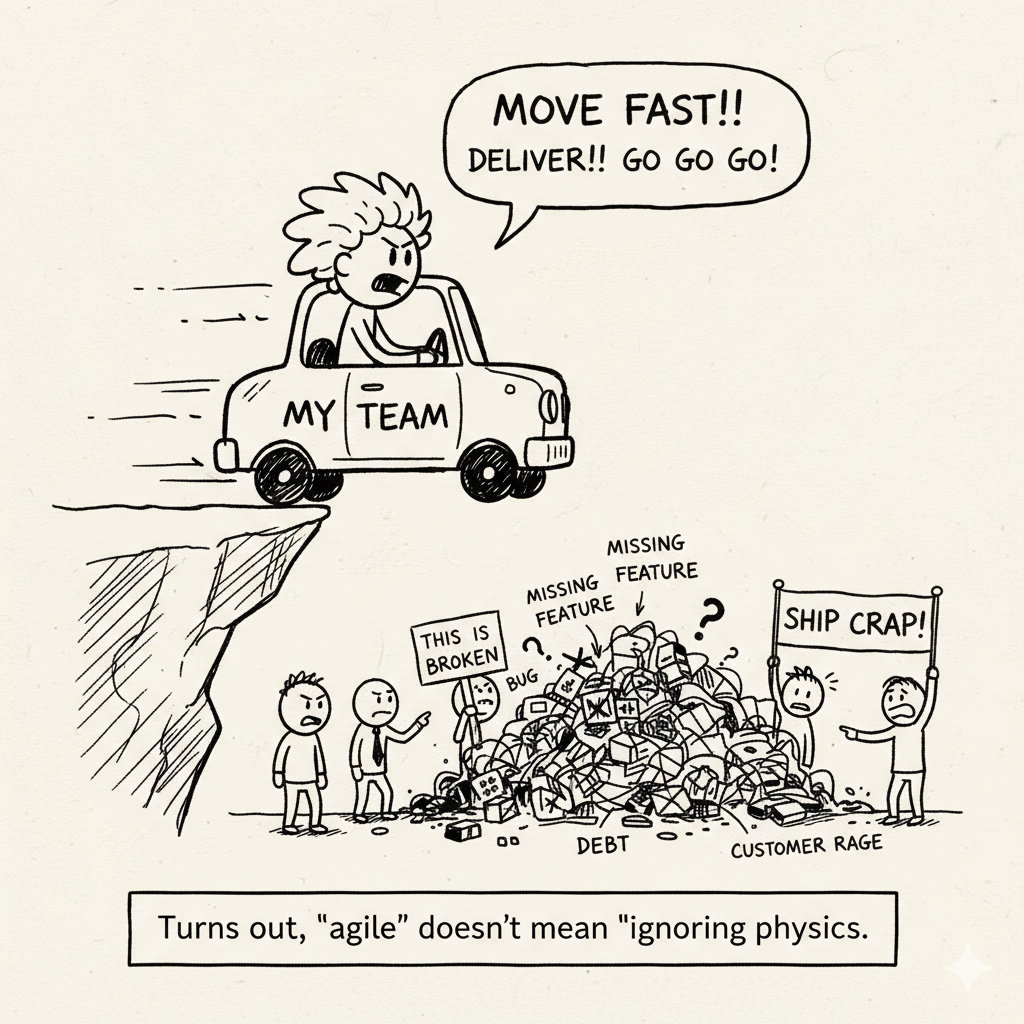

We were standing right on the edge of the cliff… and decided to take a big step forward.

For years, “move fast” has been sold to us as an unquestionable virtue. The problem is that, in far too many teams, it’s been translated into “ship crap”: ship fast, break things… and then spend months trapped in technical debt, bugs, angry customers, and general frustration.

In this article I want to show you how bad metrics (OKRs, velocity, “features per quarter”) are pushing a lot of products over the edge—and what you can do about it, because it’s not all doom and gloom.

When “moving fast” turns into “breaking everything”

The original idea was fine: continuous delivery, fast feedback, iterate with the user. The trouble started when that mantra turned into a KPI. Suddenly, the only things that seemed to matter were:

- How many features you ship per quarter.

- How many tickets you close per sprint.

- How many bright‑green OKRs you can show on the QBR slide.

Velocity, for example, is a useful metric for planning. The disaster begins when it becomes a performance target: teams inflate estimates, cherry‑pick easy high‑point tasks, and cut testing just to “hit the number”. On paper, everything gets better. In production, everything gets worse.

The Stack Overflow Blog put it nicely: when you measure engineering success purely by speed or volume of work, you push teams to cut corners and sacrifice quality, and that supposed “productivity” quickly goes up in smoke.

Spectacular crashes caused by rushing

We don’t need to invent stories; there’s a whole museum of “move fast, regret later”:

- Cyberpunk 2077 – CD Projekt Red rushed the release in 2020 with unrealistic deadlines, huge promises, and platforms (especially consoles) where the game shipped full of bugs and with terrible performance. Result: pulled from the PlayStation Store for months, mass refunds, and years of work just to get it back to “acceptable”.

- Healthcare.gov – the U.S. health insurance portal launched in 2013 under massive political and deadline pressure, with a site that crashed constantly, had security issues, and delivered a terrible user experience. It basically had to be rebuilt after the initial fiasco.

- Knight Capital – in 2012, a rushed trading software update introduced a bug that triggered erroneous trades for 45 minutes. About $440 million was lost, and the firm survived only through an emergency rescue before being acquired the following year.

- Boeing 737 MAX – an extreme case, but very telling: the MCAS system, developed under pressure, poorly designed and poorly tested, contributed to two fatal crashes, 346 deaths, and enormous financial and reputational damage.

Same pattern everywhere: pressure to hit dates, management choosing “ship now” over “ship right”, and metrics that rewarded raw output over resilience.

Everyday life: products that “work”, but feel awful

You don’t need headline disasters to see the problem. Anyone using modern software is surrounded by products where the obsession with shipping more has destroyed the experience:

- Microsoft Teams – ubiquitous and useful, with an endless roadmap of features and integrations… and a well‑earned reputation for being heavy, RAM‑hungry, and sometimes clumsy.

  - Users report frequent freezes, slow startup times, and memory usage north of 1 GB even for basic chat or calls.

  - Recent complaints mention GPU spikes that affect other apps and a general feeling that every new wave of features just makes the app heavier without really improving the basics.

- Modern AAA games – full‑price launches that hit the market with gigantic day‑one patches, serious bugs, and poor performance on perfectly reasonable hardware. Cyberpunk 2077 is the poster child, but it’s far from the only one.

Behind the scenes, there’s a long list of enterprise projects (ERPs, citizen portals, internal systems) that failed or got stuck because quality, testing, and design were sacrificed to “make the date”: tens of millions burned on failed ERP implementations, systems never adopted, or total rewrites just a few years later.

OKRs, velocity, and the illusion of progress

OKRs and similar tools aren’t evil by design. The real issue is how they’re used:

- Badly designed OKRs

  - “Ship 20 new features this quarter.”

  - “Increase the number of stories closed per sprint by 30%.”

  None of that says anything about quality, stability, incident reduction, or user satisfaction. So it’s not surprising when all of those get worse.

- Velocity as a KPI

  - If it goes up, “we’re doing great”.

  - If it goes down, “we need to push harder”.

  That leads to inflated estimates, artificially split tasks, less time for refactoring and testing, and a lot more time firefighting.

Recent articles on agile metrics all point in the same direction: using velocity as a success metric is a near‑guaranteed way to erode quality and lose sight of real business impact. What actually increases isn’t productivity, it’s your ability to fool yourself.

Where we are now: more features, more noise, same mess

The current situation, in many places, looks like this:

- Technical debt – companies that have been “moving fast” for years now find themselves with systems that are hard to change, real delivery speed that keeps getting worse, and exhausted teams. There are documented cases where accumulated debt led to catastrophic failures and massive financial impact.

- Toxic metrics – a lot of teams still measure success by output (tickets, points, releases) instead of outcomes (better user KPIs, fewer incidents, genuine business results).

- Bloated products – tools that started lean and focused have morphed into feature‑stuffed monsters that almost nobody fully uses, that eat resources, and that lost the simplicity that made them appealing in the first place.

And the punchline: many organizations are genuinely surprised when they realize that, over the medium term, this “fast” model is actually more expensive. The cost of bugs, emergency patches, outages, support, and rewrites dwarfs what it would have cost to do things properly upfront.

So now what? From “move fast” to “move smart”

The answer is not to go back to five‑year waterfall projects and 300‑page specs. It’s much simpler than that:

- Shift OKRs from “ship X features” to “improve Y user/business metric”, and make room for quality and maintenance on purpose.

- Measure more than speed: production defects, firefighting time, user satisfaction, platform stability.

- Protect time for refactors, paying down tech debt, and structural improvements as part of the plan—not as “something we’ll do if there’s time left”.

The core idea is straightforward: you can still move fast, but only if you stop rewarding “ship crap” and start rewarding real impact and sustainable quality.

Running faster towards a cliff is, technically, moving fast. It’s just not a strategy you want to be proud of.

Quick glossary (so nobody gets lost in the buzzwords)

OKR (Objectives and Key Results)

A framework for setting goals (Objectives) and measurable outcomes (Key Results). It’s meant to align the organization around a few clear, measurable goals, not to generate endless feature checklists. Good OKRs focus on impact (“increase retention by 10%”), not just output (“ship 20 features”).

KPI (Key Performance Indicator)

A key metric used to track whether a specific objective is being met (for example, average incident resolution time, NPS, error rate). Things go wrong when you pick weak KPIs (tickets closed, story points) that don’t reflect real value.

Velocity (team velocity)

In Scrum, the sum of story points a team completes in a sprint. It’s a planning tool to forecast how much work fits in the next sprint, not a way to compare teams or rank engineers. Turning it into a target encourages inflated estimates and cutting quality corners.
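To make the “planning tool” use concrete, here is a minimal sketch that forecasts the next sprint from the average of the most recent ones. The function name `forecast_velocity`, the three-sprint window, and the sprint history are all made up for illustration; real teams may well use a different window or a range instead of a single number.

```python
from statistics import mean


def forecast_velocity(completed_points, window=3):
    """Average the story points completed in the last `window` sprints.

    This is a planning estimate for the same team, not a performance score.
    """
    if not completed_points:
        raise ValueError("need at least one completed sprint")
    recent = completed_points[-window:]
    return mean(recent)


# Hypothetical history: story points completed in each past sprint.
history = [21, 18, 24, 20, 22]

# Uses only the last three sprints: (24 + 20 + 22) / 3
print(forecast_velocity(history))
```

Note that the number only makes sense inside one team: the input is that team's own history, and nothing in the calculation is comparable across teams.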

Story points

A relative unit of effort/complexity used by agile teams to estimate work. They’re not hours or days; they’re an internal scale. Different teams’ story points are not comparable, and treating them like hard productivity numbers nearly always backfires.

Output vs Outcome

- Output: what you produce (features shipped, tickets closed, lines of code).

- Outcome: the effect that has (more revenue, fewer errors, higher retention, better UX).

Most modern criticism of bad metrics boils down to this: we obsess over output while ignoring outcomes.

Technical debt

Shortcuts and sub‑optimal design or implementation decisions that let you move faster now, but that you’ll “pay back” later as higher maintenance cost, more bugs, and harder changes. When you optimize only for short‑term speed, that debt tends to explode into nasty failures and surprise bills.

MTTR (Mean Time To Recovery)

The average time it takes to restore normal service after an incident (outage, severe degradation, etc.). It’s one of the well‑known DORA metrics for high‑performing teams; focusing on “shipping fast” while ignoring MTTR is a great way to suffer.
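As a rough illustration of that definition, MTTR can be computed from a list of incidents with a start and a recovery timestamp. This is only a sketch: the `mean_time_to_recovery` helper and the incident log below are hypothetical, and in practice the hard part is agreeing on when an incident “starts” and when service counts as “restored”.

```python
from datetime import datetime, timedelta


def mean_time_to_recovery(incidents):
    """Average of (restored - started) over a list of (started, restored) pairs."""
    if not incidents:
        raise ValueError("no incidents to average")
    total = sum(
        (restored - started for started, restored in incidents),
        timedelta(),  # start value so that sum() adds timedeltas, not ints
    )
    return total / len(incidents)


# Hypothetical incident log: (start of impact, service restored).
incidents = [
    (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 30)),     # 30 min
    (datetime(2026, 1, 12, 14, 0), datetime(2026, 1, 12, 16, 0)),  # 120 min
    (datetime(2026, 2, 2, 22, 15), datetime(2026, 2, 2, 22, 45)),  # 30 min
]

print(mean_time_to_recovery(incidents))  # 1:00:00
```

One long outage dominates a plain average like this, which is one reason some teams also look at the median or the worst case.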

SLO / SLA / SLI

Not the main topic here, but useful context for the “quality” part:

- SLI (Service Level Indicator) – what you measure (latency, error rate, uptime).

- SLO (Service Level Objective) – your internal target (“99.9% of requests succeed each month”).

- SLA (Service Level Agreement) – what you contractually promise to customers, including what happens if you don’t meet it.
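The relationship between an SLI and an SLO can be sketched in a few lines: the SLI is the measured number, the SLO is the target you compare it against. The function names and request counts here are invented for the example; the 99.9% target is the one quoted in the list above.

```python
def success_rate(total_requests, failed_requests):
    """SLI: fraction of requests that succeeded in the measurement window."""
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")
    return (total_requests - failed_requests) / total_requests


def meets_slo(sli, slo_target=0.999):
    """SLO check: is the measured SLI at or above the internal target?"""
    return sli >= slo_target


# Hypothetical month of traffic: 2,000,000 requests, 1,500 of them failed.
sli = success_rate(total_requests=2_000_000, failed_requests=1_500)
print(f"SLI = {sli:.5f}, meets 99.9% SLO: {meets_slo(sli)}")
```

With 1,500 failures out of 2,000,000 requests the measured success rate is 99.925%, so the target is met; at 2,500 failures (99.875%) it would not be. An SLA would sit on top of this as a contractual promise, usually with a looser target than the internal SLO.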

Sources and references

- Overcoming Velocity Misuse: A Multi-Tiered Approach to Agility Metrics — Big Agile

- Beyond Speed: Measuring Engineering Success by Impact, Not Velocity — Stack Overflow Blog

- Velocity vs Quality — Kroolo

- Software Quality Continues to Be Sacrificed in the Name of Speed — DevOps.com

- Software Is Not Perfect: Cases of Software Failure and Their Consequences — Bits and Pieces

- Failed Projects and What We Can Learn — Tempo

- 4 Famous Project Management Failures and What to Learn From Them — ProSymmetry

- Biggest Software Failures in History: A Chronological Journey — LinkedIn

- Microsoft Teams Issue on Windows — Ubergizmo

- State of Microsoft Teams — UC Marketing

- 10 Famous ERP Disasters, Dustups and Disappointments — CIO

- Technical Debt Examples: Software Failure Examples — SIG

- Software Quality — DX Newsletter

- DORA Metrics — LinearB