How to Make Technical Decisions Without Selling Your Soul to the Hype

Posted on March 16, 2026 • 15 minutes • 3062 words

Table of contents

- Cost: what it really costs you (not just in dollars)

- Complexity: how many things you have to understand at once

- Team: work with the people you have, not the ones you wish you had

- Lock-in: what you’re handing the keys over to

- ROI: what you’re actually getting back, beyond the architectural ego trip

- Applying the filter to this month’s hype

- A smoke detector for the hype

- Quick glossary

- Sources and references

There are technical decisions made with data, time, and a bit of judgment.

And then there’s the other kind: the ones made after watching three conference talks, scrolling through two X threads, and half-reading a case study, which somehow end with phrases like “well… now that we’ve set it all up, we might as well get some use out of it, right?”

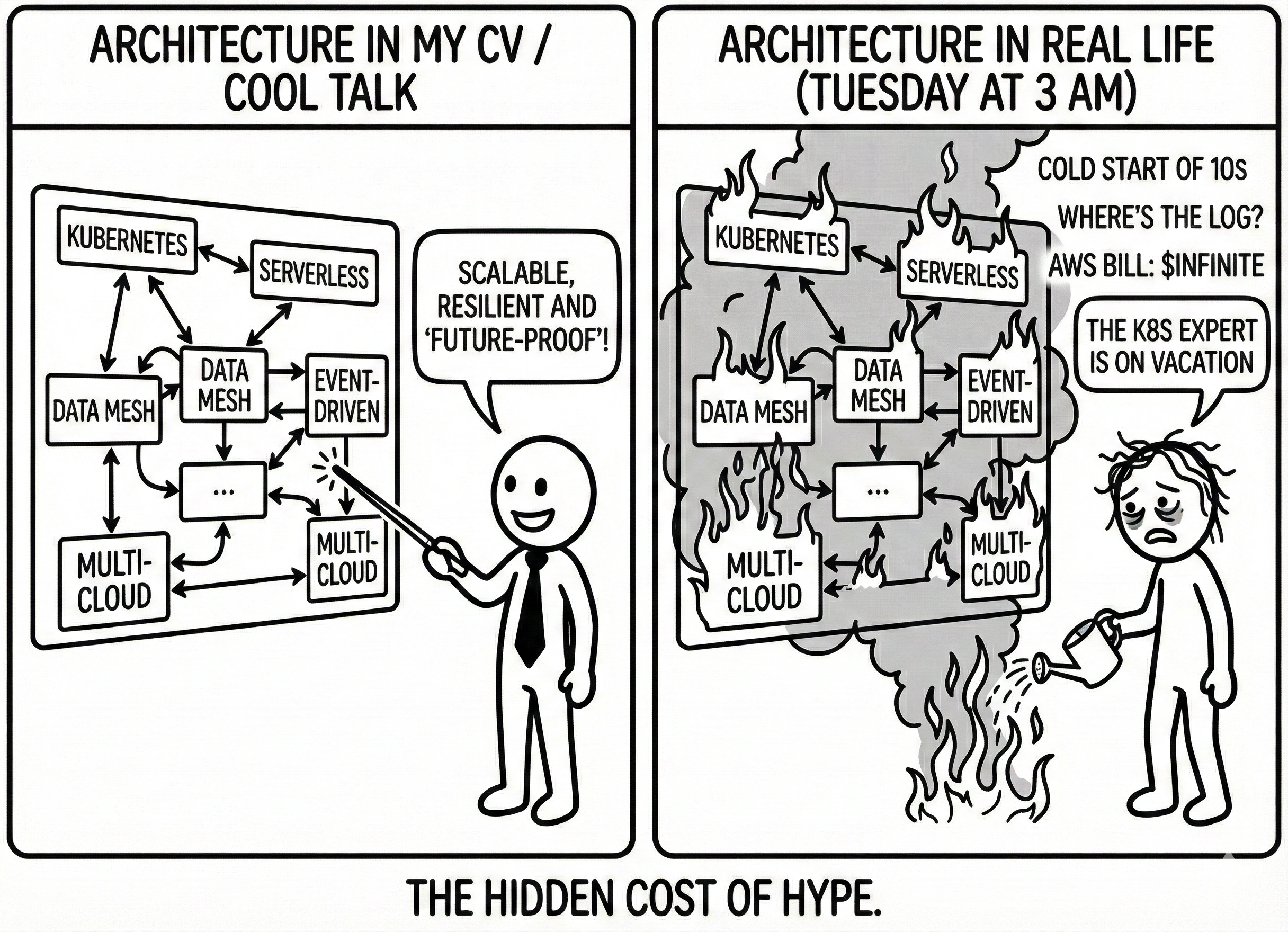

One day you’re perfectly happy with your API running on a plain old VPS, and the next you find yourself building a serverless-event-driven-data-mesh-multi-cloud architecture because you watched a video claiming “that’s how Netflix does it.”

The problem isn’t using new things; the problem is when your decisions are driven more by “it looks great on my resume” than by “it actually helps my system” (see the lessons from real migrations and the hidden cost of microservices).

This article is about deciding on technology without selling your soul to the hype. About looking at seductive proposals like “move everything to serverless,” “let’s go full microservices,” “let’s build a Data Mesh” — and being able to respond with something better than “well, it depends.” To get there, we’ll cover five concrete criteria: cost, complexity, team, lock-in, and ROI, and then use them as a filter when the next hype wave rolls in.

Cost: what it really costs you (not just in dollars)

The movie usually starts with a very pretty slide. Someone shows a chart where, after migrating to serverless, the infrastructure cost line drops as if someone cut out the carbs. “You only pay for what you use,” they say, and for a few seconds you wonder why you’re still paying for that VPS like it’s 2009.

Then the experiments begin. First you move one endpoint to functions, then another, then the nightly cron job, then “while we’re at it” the report processing pipeline. And it’s true: in many cases the server bill goes down… until you start adding up all the other costs.

You realize you’ve spent weeks of expensive people’s time untangling pricing models (“per request, per GB-second, per region?”), tuning timeouts against external services, wrestling with cold starts, and restructuring code to fit into tiny functions. Meanwhile, a new category of “intangible infra” shows up: time building new dashboards, centralizing logs from dozens of functions, tuning alerts that now fire from three different places.

The same thing happens with microservices migrations: many “7 hard-earned lessons” case studies acknowledge that the real cost wasn’t just spinning up more instances, but the premium on operational complexity and debugging overhead that nobody had budgeted for.

So when someone sells a decision as “cheaper,” the useful question isn’t just “how much do we save on cloud bills?” It’s:

“Cool diagram. Now: how many months of engineering are we betting on this, and what else dies so it can live?”

If the answer sounds like “well, we’ll figure it out, but the diagram looks gorgeous,” there’s more hype than reality in that room.
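To put rough numbers on that question, here is a minimal Python sketch of a break-even estimate. Every name and figure in it (`MigrationEstimate`, the six engineer-months, the cost per engineer-month) is a hypothetical placeholder, not data from any of the case studies mentioned here; swap in your own.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MigrationEstimate:
    """Hypothetical inputs; every figure is illustrative, not measured."""
    monthly_cloud_savings: float    # expected drop in the cloud bill, per month
    engineer_months: float          # engineering time the migration will consume
    cost_per_engineer_month: float  # fully loaded cost of one engineer-month
    extra_monthly_ops_cost: float   # new dashboards, log centralization, alert tuning


def break_even_months(e: MigrationEstimate) -> Optional[float]:
    """Months until the up-front engineering cost is recovered, or None if never."""
    upfront = e.engineer_months * e.cost_per_engineer_month
    net_monthly = e.monthly_cloud_savings - e.extra_monthly_ops_cost
    if net_monthly <= 0:
        # The new running costs eat the whole saving: it never pays back.
        return None
    return upfront / net_monthly


estimate = MigrationEstimate(
    monthly_cloud_savings=2_000,
    engineer_months=6,
    cost_per_engineer_month=10_000,
    extra_monthly_ops_cost=500,
)
print(break_even_months(estimate))  # 40.0
```

With these made-up inputs, the “cheaper” option takes 40 months to pay for itself. The sketch deliberately leaves out the second half of the question (what else dies so it can live), which is usually the harder number to pin down.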

Complexity: how many things you have to understand at once

Architectural complexity is like water damage: it spreads silently behind the walls, and by the time the stains show up, the damage is already done. Nobody wakes up and says: “I propose we increase our cognitive load until we collapse.” It happens through a series of “just one more service,” “just one more topic,” “just one more CRD.”

You start with an app on a VM. You move to containers “to stay current”: now you have images, a registry, virtual networks. Then someone proposes throwing everything into Kubernetes, because “it’s the standard”: now you have deployments, services, ingress, configmaps, operators, CRDs, and a whole new vocabulary to learn — all just to keep serving the same JSON you were serving before.

The same thing is happening with serverless: recent articles describe the “trilemma” of cost, performance, and complexity, and explain how, as solutions grow, teams end up with a tangle of functions, queues, and permissions where nobody has the full picture in their head anymore. The promise was “fewer things to manage”; the reality, in many places, is “different things to manage, but far less visible.”

The key question isn’t “can we do it?” — it’s:

“Could you walk a new hire through how this works in under ten minutes — honestly?”

Can you trace a request without jumping between five dashboards? Can you explain to anyone on the team how data flows without needing three whiteboards?

In post-mortems on failed microservices migrations, you always see the same pattern: nobody properly accounted for the true scope of the added complexity, both technical and mental.

When a technology change turns your system into something only two people understand — and only on good days — complexity has already won. The diagram might look very modern, but the day-to-day work has become considerably more brittle.

Team: work with the people you have, not the ones you wish you had

Some architectures look spectacular… in organizations of a thousand people.

Data Mesh, for example, has been marketed as the future of data: distributed domains, teams owning their data as a product, self-serve platforms. It sounds fantastic if you have several data teams, analysts, platform SREs, and a serious budget.

In smaller companies, some architects are already talking about “the dark side of the Data Mesh hype”: lots of organizational noise, poorly defined new roles, big investments in tooling… only to end up, in practice, with four broken pipelines and widespread confusion about who owns what data.

The same thing happened with microservices. The Netflix, Amazon, and Uber stories are real, but they came with years of building platform teams, investing in observability, defining bounded contexts, and spreading on-call rotations across very experienced engineers. When a five-person team tries to copy a multinational’s architecture, what it inherits isn’t the speed. It’s the burnout.

Deciding with your actual team in mind means asking questions that nobody wants to say out loud: who’s going to operate this thing once it’s in production? How much experience do we already have with distributed systems, tracing, fine-grained cloud permissions, incident response? And above all, what learning curve are we signing up for — and what are we getting in return?

What anyone who’s worked seriously with Data Mesh will tell you is exactly this: it’s not a “plug-and-play pattern,” but a heavy organizational model that only makes sense beyond a certain scale. The same goes for many “enterprise” decisions: they can be brilliant at scale and a real burden for small teams.

Accepting that the architecture has to fit the team — not the other way around — is less flashy, but much healthier. A solution your team understands and can maintain beats a cathedral that only one person knows, and only sort of.

Lock-in: what you’re handing the keys over to

Lock-in has become the go-to bogeyman for anyone using anything above bare infrastructure. “If you use Lambda, you’ll be trapped in AWS.” “If you use managed database X, you’ll never be able to leave.” “If you bet on this low-code platform, you’re toast.”

But if you read closely what people who’ve spent years in serverless have to say, you’ll find some interesting nuance. Some talk about the “conundrum of lock-in and spiraling complexity”: if you try to avoid lock-in completely, you end up building so many abstraction layers that you lose almost all the benefits of the managed service… and you’re still locked in, but now to your own homebrew layer. Others, from large companies, describe how they’ve mitigated lock-in by using open standards where it matters (data, contracts, formats) and accepting some dependency where the benefit is clear.

There’s also the opposite phenomenon — lock-out — which some cloud engineers warn about: customizing your systems (databases, runtimes) so heavily that, when you want to take advantage of something managed, you find you no longer fit anywhere. You’ve essentially locked yourself out of all the reasonable options.

The real question, then, isn’t “do we have lock-in?” — because the answer is always “yes, to something.” The real question is:

“What are we tying ourselves to, what’s the upside right now, and how much would it cost us to move if we ever need to?”

If using a cloud provider’s own serverless orchestrator saves you months of work and gives you resilience and scaling at low cost, it might be worth accepting that migrating it to another provider someday won’t come free. If plugging your business logic into a proprietary no-code environment gets you out of a jam for an internal use case, it might be perfect… as long as you know that rewriting it elsewhere will be the price of growing later on.

The accumulated wisdom in this area tends to point in the same direction: “get the most out of the platform, but go in with your eyes open.” Leverage what the provider does better than you (infra, baseline security, scaling), but keep your domain and your data in forms that won’t make a future migration feel impossible.

ROI: what you’re actually getting back, beyond the architectural ego trip

In many teams, there’s something simmering beneath the surface that nobody brings to the table. Two years after a major re-architecture – monolith to microservices, data warehouse to data mesh, VMs to serverless – someone looks back and wonders: “did all this effort actually deliver what it promised?”

The people who’ve taken the trouble to measure the real cost of microservices have confirmed what many suspected: in quite a few cases, the return hasn’t lived up to the investment. Teams that spent years wrestling with deployments, observability, and inter-service contracts, only to end up with a more expensive and more complicated system… that doesn’t deliver value to the user significantly faster than before.

Some companies have even decided to reverse course: consolidating overly fine-grained microservices into coarser services, or going back to a modular monolith to cut costs and cognitive load. In several case studies, that simplification cut a startlingly large share of infrastructure spend and reduced incidents, without meaningfully sacrificing the ability to scale.

The problem is that ROI rarely shows up in conference talks. We see the architecture diagram “before/after” far more easily than the “before/after” on the KPIs that actually matter: delivery time, recovery time, user satisfaction, operational cost.

So a useful way to ground any architecture decision is to frame it as “in 6–12 months, we should notice this”: fewer incidents, more frequent deployments, shorter time to ship new features, less infrastructure spend for the same traffic.
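One way to keep that promise honest is to write the expected outcomes down as before/after numbers and check them when the 6–12 months are up. The sketch below is a minimal Python version; the `Kpi` class, the metric names, and every value are hypothetical, chosen only to mirror the four outcomes just listed.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Kpi:
    name: str
    before: float          # baseline measured before the change (assumed non-zero)
    after: float           # value measured 6-12 months later
    lower_is_better: bool

    def improved(self) -> bool:
        if self.lower_is_better:
            return self.after < self.before
        return self.after > self.before

    def change_pct(self) -> float:
        # Percentage change relative to the baseline.
        return (self.after - self.before) * 100 / self.before


def regressions(kpis: List[Kpi]) -> List[str]:
    """Names of the KPIs that did not move in the promised direction."""
    return [k.name for k in kpis if not k.improved()]


# Hypothetical before/after values, for illustration only.
kpis = [
    Kpi("incidents per month", before=8, after=5, lower_is_better=True),
    Kpi("deployments per week", before=2, after=6, lower_is_better=False),
    Kpi("days to ship a feature", before=10, after=7, lower_is_better=True),
    Kpi("infra spend per month", before=4000, after=4600, lower_is_better=True),
]

for k in kpis:
    status = "ok" if k.improved() else "regressed"
    print(f"{k.name}: {k.change_pct():+.1f}% ({status})")

print(regressions(kpis))  # ['infra spend per month']
```

In this invented example, three of the four promises held and the infrastructure bill went up 15%. Whether that trade is worth it is still a judgment call, but at least it is a call made on numbers rather than on the diagram.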

If the only thing you can promise is “we’ll be more cloud-native, event-driven, and aligned with what’s trending,” maybe the biggest beneficiary is your resume, not your system.

Applying the filter to this month’s hype

With these five axes in mind – cost, complexity, team, lock-in, and ROI – you now have something real to work with when the next hype wave rolls in.

Someone will propose moving everything to serverless because “that’s what so-and-so bank/big tech company did.” You can point to cases where serverless brought real savings and speed, yes – but also to analyses that warn about increased complexity and cost sensitivity at scale. Instead of a gut “yes/no,” you can have a concrete conversation: our traffic patterns, our response time requirements, our team’s skills, our observability capabilities.

Or someone pitches going all-in on microservices “because we’re a modern team.” You can counter with real migration stories where complexity was massively underestimated, failure rates went up, and teams learned the hard way to move slower and more incrementally. You can ask yourselves whether you’ve truly squeezed everything out of your modular monolith first, as many engineers recommend – or whether you’re just trying to skip stages so you don’t look “behind the times.”

Data Mesh is no different: against the aspirational narrative, you have voices explaining when it isn’t the right approach – especially for small organizations or those without a mature data culture. That lets you ask whether your company looks more like the ones that got it right… or the ones that discovered its dark side.

Even with options that rarely make it onto conference slides, like no-code/low-code, it’s the same exercise: for certain internal use cases, it can deliver great short-term value; for core systems, it can become the biggest lock-in of your career. It depends on what you’re actually trying to solve.

The goal isn’t to collect articles to win arguments, but to have context: to see that behind every hype wave, there are success stories and failure stories, and the failures almost always have one thing in common – they ignored exactly these five factors.

A smoke detector for the hype

There’s no foolproof formula for making technical decisions; if there were, we’d already have an npm framework with that name and a couple of very expensive certifications to go with it (because money talks).

What you can have is a good smoke detector.

Every time a big architectural idea lands on the table – serverless, microservices, Data Mesh, Event Sourcing, whatever’s trending that week – fire it up and ask yourself these questions before the smoke gets in your eyes:

- What specific cost problem are we trying to solve, and how will we know we’ve solved it?

- What complexity are we adding, and who’s going to carry it day to day?

- Can our team, as it exists today, operate this without burning out?

- What vendor, pattern, or platform are we tying ourselves to, and where does that trade-off work in our favor?

- What return are we expecting in 6–12 months, beyond the satisfaction of saying “we did it”?

If you find clear answers, that hype might not be hype for you at all – it might be the right tool at the right time. If everything sounds like “because others did it” or “because it seems like the future,” maybe the most modern thing you can do is stick with something simpler, improve what you already have, and revisit the idea later.
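If it helps to make the detector literal, the five questions and the two warning phrases above can be sketched as a tiny checklist. This is a toy in Python, not a real tool: the function name, the axis keys, and the sample proposal are all hypothetical.

```python
from typing import Dict, List

# The five axes from this article, in the order of the questions above.
AXES = ["cost", "complexity", "team", "lock-in", "roi"]

# Answers that signal hype rather than a reason (phrases taken from the text).
HYPE_ANSWERS = {
    "because others did it",
    "because it seems like the future",
}


def unanswered_axes(answers: Dict[str, str]) -> List[str]:
    """Return the axes whose answer is missing, empty, or a hype phrase."""
    flagged = []
    for axis in AXES:
        answer = answers.get(axis, "").strip().lower()
        if not answer or answer in HYPE_ANSWERS:
            flagged.append(axis)
    return flagged


# Hypothetical proposal: "let's move the nightly reports to serverless".
proposal = {
    "cost": "Cut the reports server bill; compare invoices after 3 months",
    "complexity": "One queue and two functions, owned by the backend team",
    "team": "",
    "lock-in": "Because others did it",
    "roi": "Report generation time halves within 6 months",
}
print(unanswered_axes(proposal))  # ['team', 'lock-in']
```

A non-empty result doesn’t mean “no.” It means the conversation isn’t finished yet, and it tells you which of the five axes to go back to.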

In the end, it’s not about being cynical about every new technology (though I do love a good critique) – or becoming the grumpy elder who says everything was better back in the day (long live 8-bit!).

It’s about something harder to sell and considerably rarer: practicing reality-driven architecture.

It might not look as epic on the slides of your next conference talk. But with any luck, it’ll save you a few sleepless nights and the gut punch of looking back and finding no solid technical reason for what you built.

Quick glossary

Emergency glossary so nobody gets left out of the conversation when the hype starts flying.

- Bounded context: A logical boundary within which a data model and its language make sense. A core concept from Domain-Driven Design.

- ConfigMap: A Kubernetes resource for storing configuration (keys, environment variables) separately from application code.

- CRD: Custom Resource Definition. A Kubernetes extension that lets you define your own resource types.

- Data Mesh: An organizational data model where each domain team owns its data as a product, rather than centralizing everything in a single data team.

- Event Sourcing: An architectural pattern that stores every state change as an immutable event, rather than just saving the final state.

- Incident response: A structured process for detecting, containing, and resolving production incidents.

- Ingress: A Kubernetes resource that manages incoming HTTP/HTTPS traffic to cluster services.

- KPI: Key Performance Indicator. A metric that tells you whether something important is going well or badly.

- Lock-in: Technical dependency on a vendor or platform that makes switching to another option painful (and expensive).

- Lock-out: The opposite of lock-in: customizing your infrastructure so heavily that you exclude yourself from using managed or standard services.

- Modular monolith: An architecture where the entire application is deployed as a single unit, but the internal code is organized into well-defined, decoupled modules.

- Observability: The ability to understand the internal state of a system from its external outputs (logs, metrics, traces). It goes beyond basic monitoring: you don’t just know something is broken – you know why.

- ROI: Return on Investment. What you get back from an investment relative to what you put in.

- Tracing: Tracking the journey of a request across multiple services, used to diagnose problems in distributed systems.

- VPS: Virtual Private Server. A virtual server you rent from a provider, with root access and full control over the operating system.

Sources and references

Because arguing about architecture without references is like migrating to microservices without a reason: it sounds brave, but it rarely ends well.

- Seven Hard-Earned Lessons Learned Migrating a Monolith to Microservices - Garden.io. Practical lessons from a real monolith-to-microservices migration.

- Lessons Learned from a Monolith to Microservices - InfoQ. Real-world experiences and common pitfalls when adopting microservices from a monolith.

- The True Cost of Microservices - SoftwareSeni. An analysis of the real cost in operational complexity and debugging overhead.

- Serverless Architectures: Comparison, Pros, Cons and Case Studies - AgileEngine. A comparison of serverless architectures with pros, cons, and real-world use cases.

- The Serverless Trilemma: Cost, Performance and Complexity - Luc van Donkersgoed. The trilemma of cost, performance, and complexity in serverless.

- Is Cost a Real Concern in Serverless? - Sheen Brisals. A reflection on whether cost is truly a concern in serverless.

- The Conundrum of Serverless Lock-in, Spiralling Complexity - Serverless Advocate. The lock-in dilemma and growing complexity in serverless.

- Serverless Under Fire: Complexity, Costs, Cloud Confusion - Bran Kop. A critique of serverless from the angle of complexity, cost, and cloud confusion.

- Microservices Migration Case Study - Software Modernization Services. A microservices migration case study with real-world outcomes.

- The State of Data Mesh in 2026: From Hype to Hard-Won Maturity - ThoughtWorks. The current state of Data Mesh: from hype to maturity.

- Data Mesh: The Dark Side of the New Hype - Zoltan Horkay. The dark side of the Data Mesh hype.

- When Data Mesh Isn’t the Right Approach - Yugank Aman. When Data Mesh isn’t the right solution for your organization.

- 12-Factor Environment Automation - UDX. Environment automation following the 12-factor principles.

- DORA Metrics - LinearB. An explanation of DORA metrics for measuring engineering team performance.

- Mitigating Serverless Lock-in Fears - ThoughtWorks. How to address the fear of lock-in in serverless.

- Serverless Reduces Collaboration Cost - Capital One. How serverless reduces collaboration costs between teams.