Microservices, Monoliths, and Other Mythical Creatures

Posted on March 9, 2026 • 14 minutes • 2949 words

Table of contents

- Monoliths: the ugly friend who always shows up when you need them

- Microservices: the superhero suit that doesn’t come with instructions

- Myths that deserve retirement (with honors… and a safe distance)

- When a solid monolith is the most modern decision you can make

- So when do microservices actually start making sense?

- Choosing your architecture without turning it into a turf war

- Fewer mythical creatures, more craftsmanship

- Quick glossary

- Sources and references

Some architecture decisions are made calmly, with data, over a nice cup of coffee.

And then there’s real life, where you pick your tech stack the same way you pick a favorite sports team: because you saw it in a cool conference talk, because some big-name company uses it, or because someone tweeted that “if you don’t have 80 microservices running on Kubernetes, you’re a dinosaur.”

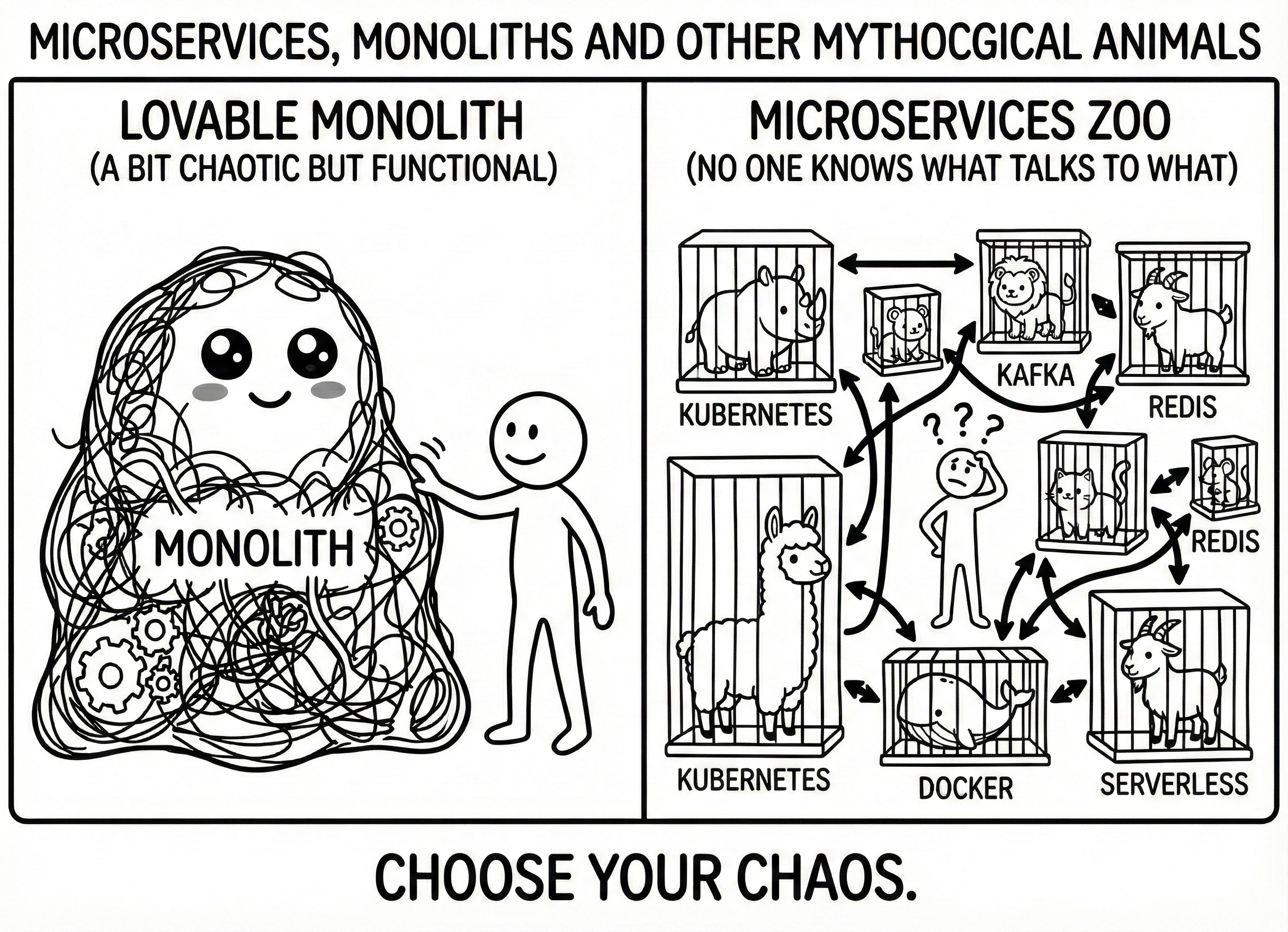

Next thing you know, you’ve gone from a lovable monolith — a little messy but functional — to a circus of services where nobody really knows what talks to what, your cloud bill is terrifying, and the only microservice running flawlessly is the one that charges you at the end of the month. All because, at some point, somebody stopped asking the only question that actually matters:

“What real problem am I actually trying to solve?”

That’s exactly what this article is about. Turning down the noise, taking a calm look at the monolith (that old friend who’s been unfairly trashed), having a laugh at microservices-as-religion, and — most importantly — walking away with a framework you can actually use when someone asks: “so, do we build this as a monolith or split it into a gazillion services?”

Monoliths: the ugly friend who always shows up when you need them

Let’s start with the usual suspect. A monolith is basically an application packaged and deployed as a single unit. It’s not glamorous, it won’t get you many conference invites, but for decades it’s been the workhorse behind millions of users doing boring but essential stuff: paying bills, buying things, booking flights. You know, the little things.

The beauty of a monolith is that a lot of things just… work. Everything lives in the same process, so internal calls are local: no flaky networks, no phantom latency, no “why does it take 400 ms to go from service A to B when they’re in the same VPC?” You fire it up on your machine and you’ve got pretty much the whole system right there, without spinning up three clusters and four SSH tunnels to chase down a bug. And deployment looks more like “we’re pushing a new version” than “we’re planning Judgment Day.”

So far, so good. Where does it go wrong? The same place almost everything goes wrong in engineering: when you grow a system without design, without discipline, and without anyone raising their hand to say “maybe we shouldn’t be doing it this way anymore.”

That’s when the monolith turns into the infamous big ball of mud: any module can call any other, layers blend together, business rules live in controllers and database triggers alike, and a change in billing breaks reports, notifications, and the login screen all at once.

And here’s the uncomfortable part: that’s not the monolith’s fault. That’s chaos’s fault. If you take that same attitude and apply it to microservices, all you’re doing is spreading the ball of mud across the network, with more latency and bigger AWS bills.

That’s why many modern architects have made peace with a concept that sounds almost provocative: the modular monolith. On the outside, you’re still deploying a single application, but on the inside, you’ve decided that not everything gets to talk to everything. Domains like Orders, Users, Catalog, Payments become separate modules, each with their own rules, controlled dependencies, and a clear entry point.

It’s not a new invention, but it’s about as modern as it gets: you keep the operational advantages of a monolith (fewer moving parts, minimal latency, relatively simple deployment) and, at the same time, you ditch the “any change touches half the galaxy” feeling. And here’s the kicker: if one day you need to extract Payments into its own service because the business grew, you’ve already got half the job done. You just move a well-defined module, not a tangled mess.
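To make "clear entry point" a bit less abstract, here is a minimal sketch of what that boundary could look like. Everything in it (`PaymentsApi`, `InProcessPayments`, `OrdersService`, the `charge` method) is a made-up name for illustration, not a prescribed layout: the only point is that Orders depends on a small contract and never reaches into Payments' internals.

```python
from dataclasses import dataclass, field
from typing import List, Protocol, Tuple


class PaymentsApi(Protocol):
    """Hypothetical contract: the only surface other modules may depend on."""

    def charge(self, order_id: str, amount_cents: int) -> str: ...


@dataclass
class _Ledger:
    """Internal to the Payments module; other modules never import this."""

    entries: List[Tuple[str, int]] = field(default_factory=list)

    def record(self, order_id: str, amount_cents: int) -> str:
        self.entries.append((order_id, amount_cents))
        return f"receipt-{len(self.entries)}"


class InProcessPayments:
    """Today's implementation: a plain local call inside the same process."""

    def __init__(self) -> None:
        self._ledger = _Ledger()

    def charge(self, order_id: str, amount_cents: int) -> str:
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        return self._ledger.record(order_id, amount_cents)


class OrdersService:
    """Orders only knows the PaymentsApi contract, not how Payments works."""

    def __init__(self, payments: PaymentsApi) -> None:
        self._payments = payments

    def place_order(self, order_id: str, amount_cents: int) -> str:
        return self._payments.charge(order_id, amount_cents)


orders = OrdersService(InProcessPayments())
receipt = orders.place_order("order-42", 1999)
```

If Payments ever does move out, the idea is that you would write a second class with the same `charge` method that calls the new service over the network, and `OrdersService` would not need to change.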

For a startup, for a young product, for a team of 3–7 people still figuring out what the heck the market actually wants, a solid modular monolith is usually the most modern choice you can make — even if it doesn’t come with a microservices sticker.

Microservices: the superhero suit that doesn’t come with instructions

In the slides, microservices look incredible: lots of small services, each doing one thing, aligned with business domains, independently deployable, scalable at will. A fever dream of autonomy and scalability.

And look: there are cases where this isn’t just hype — it’s reality. Companies like Netflix, Amazon, and Uber didn’t switch to microservices for the flex. They did it because their problems were the kind you can’t fix with a couple of indexes and some caching.

Netflix needed to stream video to millions of concurrent users, worldwide, without falling over every time a big show dropped. Moving from a monolith to a service-based architecture let them scale exactly what needed scaling, isolate failures, and experiment without blowing everything up. But they didn’t wing it: they backed the move with practices like Chaos Monkey, literally a tool whose job is to randomly kill instances in production so you’re forced to design for failure.

Amazon did something similar by aligning services with business capabilities. They coined the “two-pizza teams” concept (teams small enough to feed with two pizzas) and the “you build it, you run it” mantra: if you build a service, you also operate it. That only makes sense if you can deploy parts of the system without dragging everything else along.

Uber, for their part, found themselves with a global monolith where every change was a high-wire act on a planetary scale. Splitting into services let them deploy and scale by region, city, and feature. Again: massive pain first, plenty of internal horror stories, but the pressure justified the leap.

The common pattern: real pressure, brutal scaling needs, 24/7 business, massive teams, availability requirements where you can’t turn anything off without someone losing money.

And the failures? Plenty of those too. There are well-documented cases, like HeartFlow’s identity migration, where a rushed jump to microservices locked out every single user on launch day, with a rollback plan straight out of a horror movie. They had to go back to the monolith, rethink the strategy, and the next time around, do it with canaries, parallel runs, and a lot less hubris.
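For anyone who hasn't seen canaries and parallel runs up close, here is a rough sketch of the idea, not a reconstruction of what that team actually built. The function names and the percentage-based bucketing are assumptions made for illustration: a small, stable slice of users exercises the new path, both paths are compared, and the old answer is served whenever the new one disagrees or blows up.

```python
import hashlib
from typing import Callable, List


def in_canary(user_id: str, percent: int) -> bool:
    """Place each user in one of 100 buckets; the same user always lands in the same one."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return bucket < percent


def login_with_parallel_run(
    user_id: str,
    percent: int,
    legacy: Callable[[str], str],
    candidate: Callable[[str], str],
    mismatches: List[str],
) -> str:
    """Hypothetical wrapper: run the legacy path always, the new path only for canary users."""
    old_result = legacy(user_id)
    if not in_canary(user_id, percent):
        return old_result
    try:
        new_result = candidate(user_id)
    except Exception:
        # The new path crashed: note it and keep serving the old answer.
        mismatches.append(user_id)
        return old_result
    if new_result != old_result:
        # The new path disagrees: note it and keep serving the old answer.
        mismatches.append(user_id)
        return old_result
    return new_result


def legacy_login(user_id: str) -> str:
    return f"session:{user_id}"


def broken_login(user_id: str) -> str:
    # Stand-in for a buggy new identity service.
    return "locked-out"


mismatches: List[str] = []
results = [
    login_with_parallel_run(f"user-{i}", 100, legacy_login, broken_login, mismatches)
    for i in range(5)
]
```

With the deliberately broken candidate above, every user still gets a working session and the mismatch list fills up, which is exactly the kind of signal you want before launch day rather than on it.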

Other studies document less dramatic but equally painful stories: companies that, after “migrating to microservices,” ended up with more outages, more latency, and more operational complexity than before. Multiple small services, sure — but all glued to the same giant database, with no distributed tracing, improvised API versioning, and on-call rotations that turned into Russian roulette.

The takeaway stings, but it is what it is: microservices don’t fix bad architecture — they just distribute it across the network.

Myths that deserve retirement (with honors… and a safe distance)

There are certain phrases that keep coming back in every architecture debate, and when they get repeated enough times, they turn into dogma.

Here are three of my favorites.

“Microservices = modern, monolith = dinosaur”

Sounds right. It’s wrong.

There are absolutely modern monoliths: deployed in the cloud, with solid CI/CD, reasonable observability, domains neatly split into modules, integration tests that don’t give you nightmares, and a developer experience that doesn’t make anyone cry.

And there are microservices that are just a distributed monolith in disguise: every team copy-pastes the same patterns, nobody knows who owns what, they share a “temporary” central database that’s been around for five years, and any schema change is a total clown show.

Modern architecture isn’t about the number of services — it’s about properties: coupling, cohesion, how easy it is to make changes, resilience, cost. Everything else is noise.

“Microservices always scale better”

They scale differently, which isn’t the same thing.

In theory, they let you scale only what hurts: if search is struggling, you spin up more instances of that service and you’re done. In practice, performance studies show that distributed systems introduce network overhead, new sources of latency, and failure points that simply don’t exist in a straightforward monolith.
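A quick back-of-the-envelope calculation shows why those extra failure points add up. The sketch below assumes a request that has to pass through several services one after another, each failing independently; the 99.9% availability and 5 ms per hop are hypothetical numbers picked for the arithmetic, not measurements from any of the studies.

```python
def chain_availability(per_service: float, hops: int) -> float:
    """Availability of a request that needs `hops` services in series,
    assuming independent failures and identical per-service availability."""
    return per_service ** hops


def chain_latency_ms(per_hop_ms: float, hops: int) -> float:
    """Network latency added when the hops happen sequentially."""
    return per_hop_ms * hops


# Hypothetical inputs: ten services in the call path, each at 99.9%.
ten_hops = chain_availability(0.999, 10)
added_latency = chain_latency_ms(5.0, 10)

print(f"availability across 10 hops: {ten_hops:.4f}")
print(f"added latency across 10 hops: {added_latency:.0f} ms")
```

Ten hops at 99.9% each land at roughly 99.0% for the whole request, so a chain of individually solid services is noticeably less reliable than any single one of them. Inside a monolith those same ten steps are local function calls.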

If your problem isn’t that one specific part of the system needs 10x the scale of everything else, you might get by for years with a monolith that scales vertically and horizontally just fine — without building a whole circus of services.

“With microservices, every team moves at full speed”

If only. That only happens when you have:

- Teams that can operate what they build, not just ship code and vanish.

- Solid CI/CD, testing, logging, metrics, and distributed tracing tooling.

- Clear domain boundaries, so each service makes sense on its own and isn’t some random function exposed over HTTP.

Without that, what you actually get is teams spending their days waiting on other teams, contracts changing without warning, and bugs going on a road trip — bouncing from service to service with nobody sure which border they got stuck at.

When a solid monolith is the most modern decision you can make

There’s a pretty consistent thread across articles, guides, and retrospectives that says something unsexy but dead-on: most teams would be better off with a well-built modular monolith than with microservices.

Think about these scenarios:

You’ve got a young product, a startup or a small team. There are four of you, the business model changes every quarter, and half the stories start with “turns out users actually want…” In that world, splitting into 20 services is like renting 20 apartments when you don’t even know what city you want to live in. More keys, more doors, more headaches. Less shipping.

Your domain is fuzzy. You don’t have clear bounded contexts. Today Orders and Cart are one thing; tomorrow, maybe not. In that kind of environment, carving network contracts in stone is a recipe for pain. It’s way cheaper to make mistakes inside a monolith and reorganize modules than to version 10 APIs.

Your team doesn’t have strong distributed systems experience. Nobody’s fought with distributed tracing, network partitions, fine-grained IAM, or long-term contract versioning yet. Learning all that is great, but maybe not at the same time you’re trying to ship a product with a hard deadline.

Your scaling needs are reasonable. You’re not Netflix, you’re not Amazon, and you’re not living in a perpetual Black Friday. With a well-tuned monolith, some caching, a decent database, and basic horizontal scaling, you’re set for years.

And finally, the big one: operational cost. Every new service brings invisible baggage that doesn’t show up on the architecture diagram: more alerts, more deployments, more logs to sift through, more maintenance. A monolith with a good pipeline and automation tends to be cheaper to operate than a fleet of barely justified services.

In all these scenarios, investing your energy in solid modularization, tests that actually mean something, proper CI/CD, and halfway-decent observability usually pays off way more than fragmenting the system on day one.

So when do microservices actually start making sense?

There’s no green light that says “now’s the time.” But there are signals that keep showing up in migration stories that went reasonably well.

Wildly uneven scaling patterns start to appear. One part of the system (search, checkout, streaming, processing queues) handles way more traffic than the rest. Scaling the entire monolith just to support that one piece starts getting expensive and wasteful.

Rates of change shoot off in different directions. One business module needs weekly changes, while others need to stay practically frozen for stability or compliance reasons. Every monolith deployment turns into a political negotiation: “don’t touch my stuff, it’s under audit.”

The organization has grown. You have multiple real teams, with different leads, different goals, sometimes even different time zones. Forcing everyone to work in the same repo, with the same deployment cycle, creates more friction than value.

Your availability and isolation requirements are getting serious. A crash in a secondary module can’t be allowed to take down the customer-facing side; you need to separate risk by region, by product, by user type.

And, crucially: you’ve already squeezed the monolith dry. You’ve fixed queries, added indexes, tuned caching, parallelized where it made sense, cleaned up modules — and you still have clear bottlenecks that won’t go away without splitting things apart.

When several of these signals converge, microservices stop being an architectural vanity project and start being a viable answer. Even then, experience says: go slow, use patterns like strangler, extract peripheral stuff first, measure every step, and keep the option to roll back if things go worse than expected.
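As a rough illustration of the strangler idea with a rollback option, here is a minimal routing facade. It is a sketch under simple assumptions: requests are identified by a path string, the handlers are stand-ins for real proxy calls, and names like `StranglerFacade` and `/reports` are invented for the example.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


def monolith_handler(path: str) -> str:
    # Stand-in for the existing monolith; in practice this would proxy the request.
    return f"monolith:{path}"


def reports_service_handler(path: str) -> str:
    # Stand-in for a newly extracted, peripheral service.
    return f"reports-service:{path}"


class StranglerFacade:
    """Routes by path prefix; anything not migrated falls through to the monolith."""

    def __init__(self, legacy: Handler) -> None:
        self._legacy = legacy
        self._migrated: Dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        self._migrated[prefix] = handler

    def roll_back(self, prefix: str) -> None:
        # Rolling back is just dropping the route; the monolith code path still exists.
        self._migrated.pop(prefix, None)

    def handle(self, path: str) -> str:
        # Longest prefix first, so a sub-path can be migrated on its own.
        for prefix in sorted(self._migrated, key=len, reverse=True):
            if path.startswith(prefix):
                return self._migrated[prefix](path)
        return self._legacy(path)


facade = StranglerFacade(monolith_handler)
before = facade.handle("/reports/monthly")

facade.migrate("/reports", reports_service_handler)
after = facade.handle("/reports/monthly")
untouched = facade.handle("/orders/42")

facade.roll_back("/reports")
rolled_back = facade.handle("/reports/monthly")
```

The design choice worth noticing is that the monolith keeps its original code path until you are confident in the new service, so "keep the option to roll back" costs one removed route instead of an emergency redeploy.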

Choosing your architecture without turning it into a turf war

At the end of the day, choosing between a monolith, microservices, or some hybrid isn’t about winning arguments — it’s about knowing what you’re betting on.

Think of it as a mental cheat sheet:

- What do we already know about our domain, and what’s still foggy?

- How many people will be working on this system in a year? In three?

- Where’s the real pain right now? Scaling? Deploying? Coordinating teams? Understanding the code?

- How much can we afford to spend — in time, money, and brainpower — to operate whatever we decide today?

If you answer honestly, a lot of decisions become less mythical. Sometimes the answer is: “right now, a modular monolith with good practices will take us much further.” Other times it’ll be: “we’ve reached the point where extracting this domain into a separate service gives us room to breathe — let’s do it, but with a plan and without breaking things for sport.”

Fewer mythical creatures, more craftsmanship

Monoliths, microservices, hexagonal architecture, events, DDD… it’s easy for all of this to sound like a zoo of mythical creatures. But behind every label is something much simpler: people trying to build systems that work, that can grow, and that don’t force anyone to sleep at the office too often.

There are old monoliths quietly billing millions a year without fanfare, and sleek microservices that let companies move at an impressive clip. There are also unmaintainable monoliths and swarms of services that are basically a monument to human suffering.

The key isn’t which creature you pick — it’s whether you treat it for what it is: a tool to solve your specific problems, not a totem to worship in slide decks.

Pull that off, and your system might never end up in a Netflix case study… but with a little luck, it won’t end up in one of those “never do this, we learned the hard way” threads either.

And for a lot of teams, that’s already a win worth celebrating.

Quick glossary

In case any of these terms or acronyms gave you the stink eye, here’s a quick rundown.

- Bounded context: a logical boundary within a system where a specific domain model makes sense and stays consistent. Outside that boundary, the same concepts may mean completely different things.

- CI/CD (Continuous Integration / Continuous Delivery): automation practices that let you integrate code changes frequently (CI) and keep them ready to ship to production quickly and reliably (CD).

- DDD (Domain-Driven Design): a software design approach that organizes code around business concepts rather than technology. This is where ideas like bounded contexts, aggregates, and domain events come from.

- Hexagonal architecture: a design pattern (also called ports and adapters) that separates business logic from technical details (databases, APIs, interfaces). The idea is that the core of the system doesn’t depend on anything external.

- IAM (Identity and Access Management): the system that manages who can access what within an infrastructure. In the cloud, it’s typically the service that controls user permissions, roles, and security policies.

- Strangler pattern: a gradual migration strategy where you replace parts of a legacy system with new components, like a strangler fig that grows around a tree until it takes over completely.

- Distributed tracing: an observability technique that lets you follow a request’s journey through multiple services, so you can see where time is lost or where things break.

- VPC (Virtual Private Cloud): an isolated virtual network inside a cloud provider where you place your resources. Even though everything is “in the cloud,” services within a VPC communicate as if they were on a private network.

Sources and references

The sources behind this piece, in case you want to dig in without me yapping about it.

- Monolithic to Microservices - Ideas2IT. Practical guide on when and how to migrate from monolith to microservices.

- Monolithic vs Microservices - GetDX. Detailed comparison with decision criteria and trade-offs.

- Microservices vs Monolith - Atlassian. Key differences between both approaches with context-specific recommendations.

- Microservices vs Monoliths: Lessons Learned & How to Choose - Nortal. Lessons learned from real projects and selection criteria.

- Microservices Architecture Analysis - SSRN. Academic paper comparing both architectures.

- Why Migrate from Monolith to Microservices - Edge Delta. Motivations, risks, and signals that it’s time to migrate.

- From Monolith to Microservices: Real-World Case Studies - DEV. Real-world cases from Netflix, Amazon, and Uber with lessons learned.

- Microservices Migration Case Study - Software Modernization Services. HeartFlow case and safe migration strategies.

- Optimized Infrastructure Costs - MindK. Operational cost analysis between monoliths and distributed architectures.

- Microservices vs Monoliths: Real-World Case Studies - MoreBetter. Cases where microservices migration made things worse.

- Performance Comparison: Monolithic vs Microservices - CEUR-WS. Academic paper comparing real-world performance of both styles.

- Migration to Microservices-Based Architectures: Success Stories - Chakray. Success stories with overhead and benefit analysis.

- Migrating Monolith to Microservices - Acropolium. Step-by-step guide with signals for when to migrate.

- Monolith to Microservices Migration Strategies - CircleCI. Gradual strategies (strangler pattern, canary, etc.).

- Monolith vs Microservices: What Actually Matters - Plain English/AWS. Lessons from Netflix, Amazon, and Atlassian.

- Microservices Architecture Analysis (Master Thesis) - University of Twente. Academic analysis of microservices architectures.