Modern Architecture Fundamentals (No Snake Oil Included)

Posted on March 2, 2026 • 15 minutes • 2993 words

Table of contents

- What the heck does “modern architecture” actually mean (beyond the acronym soup)

- The classic monolith: from teenage crush to big ball of mud

- The modular monolith: when the monolith hits the gym and grows up

- Microservices: surgical tool, not official religion

- Living on events: “this happened, deal with it”

- Designing for the cloud, not just in the cloud

- Latency, state, and data: the three final bosses

- So what’s all this good for, anyway?

- Quick glossary

- Sources and references

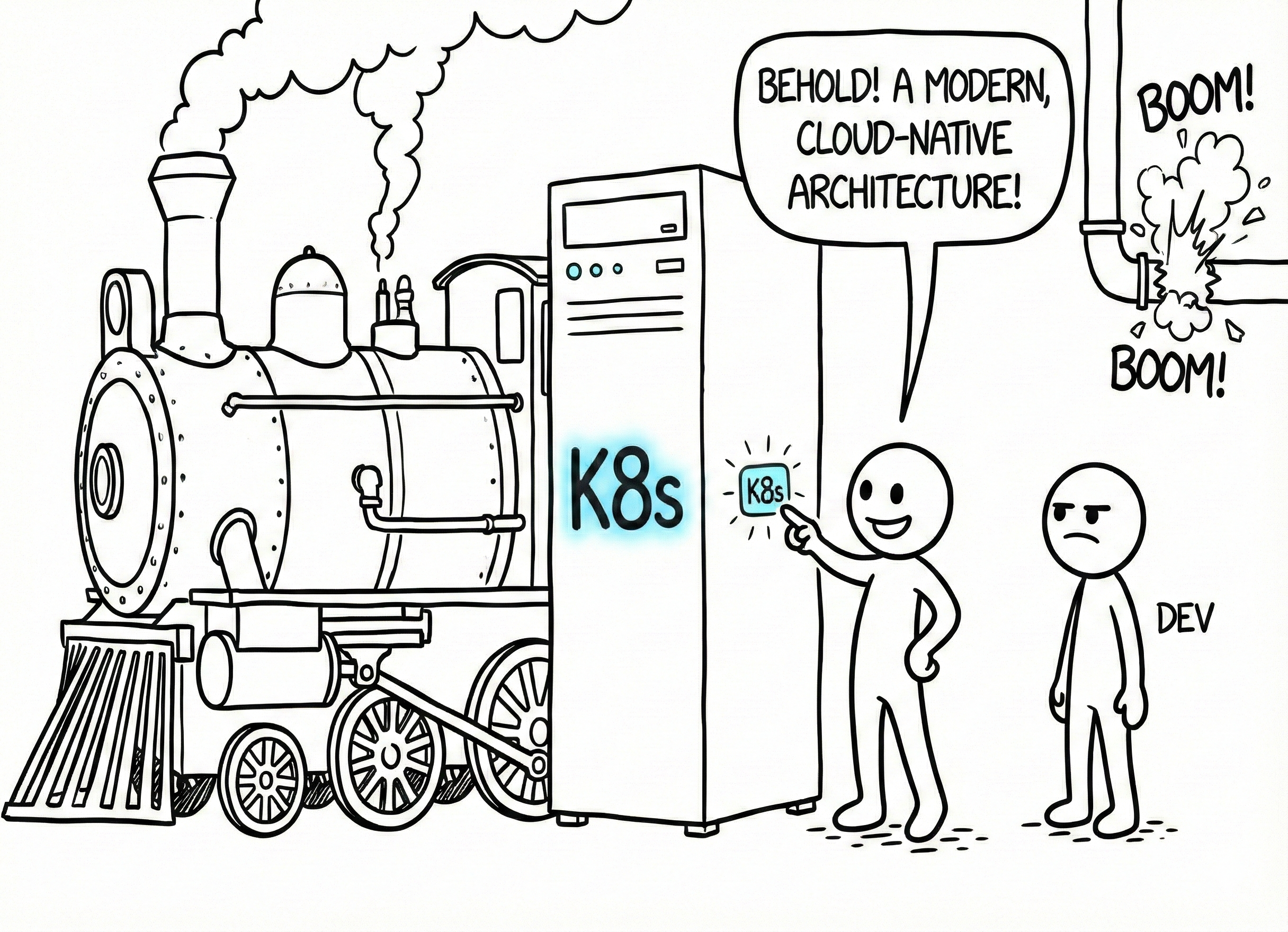

Imagine someone telling you: “I’ve set up my app on an on-premises server with Oracle 9i, but don’t worry, it’s modern architecture because it runs Docker.” That’s the moment you understand why people bail on architecture meetings pretending they have a dental emergency.

When we talk about “modern architecture,” we’re not talking about slapping Kubernetes onto everything or cramming as many buzzwords as possible into a slide deck. We’re talking about something far less flashy and far more difficult: building systems that survive in today’s ecosystem without going obsolete or blowing up every time the business changes a “simple” requirement. Systems that live in the cloud (or several clouds), communicate over networks that fail, store data scattered across half the planet, and still need to keep responding when someone decides “we also need to go multi-region because an important client said so.”

This piece isn’t a magic recipe or a consulting brochure. Think of it more as a survival guide: what “modern” actually means in architecture, what real options you have (modular monolith, microservices, events, cloud-native…), and what ideas you should tattoo on your forehead before opening your next diagram in draw.io.

What the heck does “modern architecture” actually mean (beyond the acronym soup)

First things first: let’s bust a myth. “Modern” doesn’t mean “microservices by default” or “if you’re not on Kubernetes, you’re legacy.” Above all, it means you accept the context you live in:

- You’re deploying to one or more clouds, public or hybrid, each with its own rules: instances that pop up and vanish, flaky networks, disks that don’t last forever, and bills that grow if you forget to turn something off.

- The user is no longer “right next to the server”: they’re connecting from a phone in another region, on maddening latency and sketchy Wi-Fi.

- Business requirements move fast: what’s an MVP with a simple flow today becomes integrations with three vendors, payments in five currencies, and a mandatory dark mode “because everyone else has it” tomorrow.

- Data no longer lives in a single, tidy, happy central database: now you’ve got transactional stores, caches, analytics warehouses, event queues, and – if you’re lucky – some common sense to tie it all together.

A modern architecture, in this context, doesn’t marry a single style. It might start as a perfectly respectable monolith, evolve into a modular monolith, extract a few microservices where they’re actually needed, use events where asynchrony is natural, and lean on 12-Factor principles so you don’t cry every time you deploy. The winner isn’t whoever packs the most boxes into a diagram – it’s whoever keeps the system understandable five years down the road.

The classic monolith: from teenage crush to big ball of mud

It all starts innocently enough. You have an idea, you build a monolith: routes, controllers, business logic, data access, batch scripts – all in one repo. A single artifact you push to production.

For a small team or a product in its early days, it’s nearly perfect: one pipeline, easy debugging, minimal internal latency, and that warm feeling that if you run the project on your machine, “everything’s right there.”

The problem isn’t the monolith – it’s what we tend to do to it. Time passes, deadlines tighten, and every module starts calling every other module “because hey, it’s right there.” The business layer bleeds into framework details, SQL queries show up in places you’re scared to open, and changing a tiny billing behavior means touching three layers, two modules, and praying to the patron saint of your choice.

It’s what many articles and talks lovingly(?) call the architectural big ball of mud: a system where technically “everything is connected” and practically nobody dares touch anything without first cracking open a bottle of antacid – or hydrochloric acid, in extreme cases.

The modular monolith: when the monolith hits the gym and grows up

The adult version of the monolith is the modular monolith. On the outside, you’re still deploying a single application; on the inside, you’ve decided that chaos has to start paying rent.

You begin drawing boundaries: domains like Catalog, Orders, Users, Payments stop being random folders and become modules with clear interfaces and controlled dependencies. Orders can’t just call half the world, Users doesn’t read tables that aren’t its own just because it can, and infrastructure details (framework, ORM, drivers) stay in their layer without invading business logic.
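A minimal sketch of what such a boundary could look like in code. The names `PaymentsApi`, `FakePayments`, and `OrdersService` are invented for illustration and come from no real codebase. The idea is that Orders depends only on a narrow interface that Payments publishes, never on its tables or internals.

```python
from dataclasses import dataclass
from typing import Protocol


class PaymentsApi(Protocol):
    """The only surface the Payments module exposes to others (hypothetical)."""

    def charge(self, order_id: str, amount_cents: int) -> bool: ...


@dataclass
class FakePayments:
    """Stand-in implementation; a real one would live inside the Payments module."""

    limit_cents: int = 100_000

    def charge(self, order_id: str, amount_cents: int) -> bool:
        # Approve anything at or under the limit.
        return amount_cents <= self.limit_cents


class OrdersService:
    """Orders knows the PaymentsApi contract, not Payments' tables or ORM models."""

    def __init__(self, payments: PaymentsApi) -> None:
        self._payments = payments

    def place_order(self, order_id: str, amount_cents: int) -> str:
        paid = self._payments.charge(order_id, amount_cents)
        return "confirmed" if paid else "payment_declined"


orders = OrdersService(FakePayments())
print(orders.place_order("o-1", 2_500))
print(orders.place_order("o-2", 250_000))
```

If Payments ever has to move out into its own service, the implementation behind `PaymentsApi` changes and `OrdersService` ideally stays as it is.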

Someone might say: “but that sounds like microservices, doesn’t it?” And that’s exactly the point. The modular monolith gives you many of the benefits without the operational complexity: no network latency between modules, no need for NASA-grade distributed observability, and your deployment is still a single unit you can push and roll back with relative calm.

The key difference is that when you genuinely have a problem that justifies pulling a module out (say, Payments needs to scale very differently, or you’ve got weird legal requirements), you can extract it with controlled surgery because it already had internal boundaries. For a huge number of systems, this is more “modern” than diving headfirst into 37 microservices on day one.

Microservices: surgical tool, not official religion

At some point along the way, someone read that the big companies use microservices and decided that everyone had to do it. That story usually ends the same way: with a system that’s more complicated, slower, and more expensive – but with very cool diagrams in the internal presentations.

In its serious version, a microservices system splits capabilities into small services, each with its own lifecycle, deployment, and – ideally – its own database.

This has real advantages: you can scale only what hurts – if checkout is struggling, you scale checkout, not the entire monolith; a failure in the recommendation service shouldn’t bring down the whole purchase flow if you set the right boundaries and applied resilience patterns; different teams can work and deploy at different speeds without constantly stepping on each other, as long as the contracts between services are clear.

But the bill is real: every call between services is a network call that can fail, add latency, and require timeouts, retries, circuit breakers, and the whole catalog of patterns that sound heroic until you actually have to implement them. Data gets spread around, consistency problems pop up, and API versions need to be supported for a while. Operating the system (logs, metrics, traces, deployments, versioning) goes from “a bit messy” to “we need someone whose entire job is just this.”
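To give a feel for that bill, here is a deliberately small circuit breaker sketch. The class, its parameters (`max_failures`, `reset_after_s`), and the `flaky` function are assumptions made up for this example; production libraries handle concurrency, metrics, and per-error policies that this one skips.

```python
import time
from typing import Callable, List, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker refuses to call the downstream service."""


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after_s = reset_after_s
        self._clock = clock
        self._failures = 0
        self._opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open; failing fast")
            # Cool-down elapsed: allow a trial call (half-open).
            # One more failure re-opens the circuit immediately.
            self._opened_at = None
            self._failures = self.max_failures - 1
        try:
            result = fn()
        except Exception:
            self._failures += 1
            if self._failures >= self.max_failures:
                self._opened_at = self._clock()
            raise
        self._failures = 0  # a success closes the circuit again
        return result


def flaky() -> str:
    """Hypothetical downstream call that always times out."""
    raise TimeoutError("downstream timed out")


breaker = CircuitBreaker(max_failures=2, reset_after_s=60.0)
outcomes: List[str] = []
for _ in range(3):
    try:
        breaker.call(flaky)
    except CircuitOpenError:
        outcomes.append("fast-fail")
    except TimeoutError:
        outcomes.append("timeout")
print(outcomes)
```

The first two calls pay for the timeout; the third is rejected without touching the network. That is the entire point of the pattern, and about forty lines for just one of the items in that catalog.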

Sensible modern architecture doesn’t start in “microservices to the max” mode by default. It usually begins with something more manageable (modular monolith) and only when real pain shows up (teams too large for a single deployment, wildly different scaling needs, clear organizational boundaries) does it extract parts into separate services with a concrete reason – not for sport.

Living on events: “this happened, deal with it”

There’s another twist you’ll see more and more: event-driven architecture. Here, the center of gravity isn’t direct calls – it’s things that have happened: “an order was created,” “a payment was confirmed,” “the user uploaded a document,” “the model made a prediction.”

The trick is thinking in the past tense: something has occurred.

For example, OrderCreated is a message cast out into the universe. The orders service emits it, and from there, other components subscribe and react: one sends an email, another updates a dashboard, another triggers a fraud workflow, another feeds an analytics warehouse. The emitter doesn’t know and doesn’t care who’s listening.

This has some very juicy virtues:

- Real decoupling: the emitter doesn’t need to know all the consumers.

- Painless extensibility: you can add new reactions (“now we also want to send an SMS”) without touching the original service.

- Selective scaling: if an event fires a lot, you scale the consumers that process it without touching everything else.

- A business log almost for free: if you persist the events, you get a sequence of things that happened, useful for auditing, debugging, and ML models that want to understand how the system behaves.
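The decoupling and extensibility points can be sketched with a tiny in-process event bus. This is only an illustration: `EventBus` is a made-up stand-in for a real broker, delivery here is synchronous, and the fields on `OrderCreated` are invented.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class OrderCreated:
    """A past-tense fact: it already happened (field names are illustrative)."""

    order_id: str
    customer_email: str


class EventBus:
    """In-process stand-in for a real broker; handlers run synchronously."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[type, List[Callable]] = defaultdict(list)

    def subscribe(self, event_type: type, handler: Callable) -> None:
        self._handlers[event_type].append(handler)

    def publish(self, event: object) -> None:
        # The emitter only hands the event over; it never names a consumer.
        for handler in self._handlers[type(event)]:
            handler(event)


bus = EventBus()
log: List[str] = []

bus.subscribe(OrderCreated, lambda e: log.append(f"email to {e.customer_email}"))
bus.subscribe(OrderCreated, lambda e: log.append(f"fraud check for {e.order_id}"))
# A new reaction added later; the code that publishes is untouched.
bus.subscribe(OrderCreated, lambda e: log.append(f"sms for {e.order_id}"))

bus.publish(OrderCreated(order_id="o-42", customer_email="ada@example.com"))
print(log)
```

With a real broker the consumers would run in other processes and at their own pace, which is exactly where the fine print below comes from.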

The fine print: reasoning about asynchronous systems is harder; you need good observability to follow an event from birth to the end of its journey. And it forces you to live with the famous eventual consistency: for a few seconds, different parts of the system can see different states of reality. A modern architecture doesn’t pretend that away – it deliberately decides where it’s tolerable (emails, metrics, recommendations) and where it isn’t (account balance, payment confirmation).

In practice, the interesting systems end up being hybrids: critical user interactions are usually synchronous (“I want to know right now if my purchase was charged”), and everything that can happen in the background travels as events (“send the receipt, update the data warehouse, recalculate the user ranking”).

Designing for the cloud, not just in the cloud

Taking your old monolith, packaging it in an AWS VM, and proclaiming it’s now cloud-native is pretty common… and pretty naive.

Designing for the cloud means accepting a few things from the start: instances will die and respawn like it’s nothing, horizontal scaling (more small instances) is usually better than doubling the RAM on a single box, configuration changes per environment and can’t be glued to the code, and you need centralized logs and metrics because SSH-ing in to read /var/log/app.log by hand doesn’t scale anymore.

The 12-Factor App manifesto is still a kind of compass: config in the environment, explicit dependencies, stateless processes, logs treated as streams to aggregation systems, clear separation between build and run, automated and repeatable deployments, dev and prod environments that look reasonably alike. You don’t need to follow every factor like a commandment, but the more you ignore them, the more future tears you’re stockpiling.
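As a small illustration of the “config in the environment” factor, here is one way it might look. The variable names (`DATABASE_URL`, `LOG_LEVEL`, `REQUEST_TIMEOUT_S`) and the defaults are assumptions chosen for the example, not a standard.

```python
import os
from dataclasses import dataclass
from typing import Mapping


@dataclass(frozen=True)
class Settings:
    database_url: str
    log_level: str
    request_timeout_s: float


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read config from the environment; fail loudly if a required value is missing."""
    try:
        database_url = env["DATABASE_URL"]  # required, deliberately no default
    except KeyError:
        raise RuntimeError("DATABASE_URL must be set") from None
    return Settings(
        database_url=database_url,
        log_level=env.get("LOG_LEVEL", "INFO"),
        request_timeout_s=float(env.get("REQUEST_TIMEOUT_S", "2.0")),
    )


# Passing a plain dict keeps the example independent of the real environment.
settings = load_settings({
    "DATABASE_URL": "postgres://db.internal/app",
    "LOG_LEVEL": "DEBUG",
})
print(settings)
```

The same build artifact can then run in dev, staging, and prod; only the environment around it changes.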

A few simple questions that reveal whether you’re on the right track:

- What happens if you kill an instance in production? Does it rebuild itself, or does the app break?

- Can you spin up a new instance in seconds without 14 manual steps?

- Does your service store important stuff in process memory… or in external stores designed for that?

- Can you see what’s going on without logging into machines one by one?

If most of your answers are reasonable, you’re closer to modern architecture than a lot of folks with Kubernetes in their email signature.

Latency, state, and data: the three final bosses

Beyond the pretty diagrams, there are a handful of cross-cutting concerns worth getting straight: how you split responsibilities, where you draw boundaries, and how you deal with three silent enemies – latency, state, and data.

Separation of responsibilities: who does what (and who doesn’t)

Still the oldest and most trampled principle. Separating responsibilities means keeping the payments module from knowing how users are persisted elsewhere, keeping business logic free of framework details, and keeping the code that calls an external vendor from also deciding whether an order is paid.

In a modular monolith, this shows up as clear packages and modules; in a distributed system, as APIs that expose business actions, not table dumps with all-caps names.

Clear boundaries: where yours ends and mine begins

A healthy architecture has boundaries you can point to: this module belongs to Billing, that one to Catalog, this service owns “business users,” that data store is analytics-only.

Those boundaries live in contracts: message types, event schemas, endpoints, compatibility agreements. When they’re well designed, changing something inside a domain doesn’t cause a global earthquake; when they’re not, any new column is an “everyone to the war room” event.
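One common way to keep a contract change from becoming an earthquake is a “tolerant reader”: the consumer keeps the fields it knows, ignores the rest, and gives defaults to fields added later. A rough sketch, with an invented `PaymentConfirmed` schema:

```python
from dataclasses import dataclass, fields
from typing import Any, Mapping


@dataclass(frozen=True)
class PaymentConfirmed:
    payment_id: str
    amount_cents: int
    # Imagine this field arrived in a later schema version; the default
    # keeps events from older producers valid.
    currency: str = "EUR"


def parse_event(payload: Mapping[str, Any]) -> PaymentConfirmed:
    """Tolerant reader: keep the fields we know, ignore the ones we don't."""
    known = {f.name for f in fields(PaymentConfirmed)}
    return PaymentConfirmed(**{k: v for k, v in payload.items() if k in known})


old = parse_event({"payment_id": "p-1", "amount_cents": 1999})
new = parse_event({
    "payment_id": "p-2",
    "amount_cents": 500,
    "currency": "USD",
    "risk_score": 0.1,  # unknown to this consumer, silently dropped
})
print(old)
print(new)
```

A missing required field still fails (here with a `TypeError`), which is what you want: additive changes pass through, breaking changes get noticed.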

Latency: the network always collects its toll

In distributed systems, no matter how optimized your algorithms are, what rules is the time it takes for everything to talk to everything else. Every network hop adds latency and failure points. If your API calls a service, which calls another service, which queries a database in another region… the user starts suspecting their request went on a sightseeing tour.

And then there’s physics: there are actual miles between Madrid and Virginia – no magic about it. In multi-region and global deployments, every decision about “where does this data live” directly impacts the user experience.

Modern architecture here means not designing as if everything were localhost: avoiding deep synchronous chains, using caches wisely, moving to async anything that doesn’t need an immediate response, and accepting that some things are simply going to take longer.
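A back-of-the-envelope calculation makes the point concrete. It assumes light in optical fiber covers roughly 200 km per millisecond and uses a round 6,000 km for Madrid to Virginia. Both figures are approximations for illustration, and real routes are longer than the straight line.

```python
from typing import Sequence

FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound from propagation delay alone (no routing, no processing)."""
    return 2 * distance_km / FIBER_KM_PER_MS


def chain_latency_ms(hops_ms: Sequence[float]) -> float:
    """Sequential synchronous calls add up; nothing overlaps."""
    return sum(hops_ms)


MADRID_VIRGINIA_KM = 6_000  # rough figure, assumed for the example

rtt = min_round_trip_ms(MADRID_VIRGINIA_KM)
print(rtt)                                # 60.0
print(chain_latency_ms([rtt, rtt, rtt]))  # 180.0
```

Under these assumptions, three cross-region calls made one after another cost at least 180 ms before any server has done useful work, which is why deep synchronous chains hurt so much.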

State and data: the sticky part of all this

State is the stuff where, if you lose it, someone screams.

In a cloud-native world, the ideal application is nearly disposable: if a process dies, another picks up the slack because the important state lives outside: databases, caches, queues, streams.
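A toy sketch of that “nearly disposable” idea: the app instance holds no cart data of its own, so a replacement instance sees the same state. `InMemoryStore` is a made-up stand-in for an external database or cache, and all names here are hypothetical.

```python
from typing import Dict, List, Protocol


class CartStore(Protocol):
    def add(self, user_id: str, item: str) -> None: ...
    def items(self, user_id: str) -> List[str]: ...


class InMemoryStore:
    """Stands in for an external store that outlives any single app process."""

    def __init__(self) -> None:
        self._data: Dict[str, List[str]] = {}

    def add(self, user_id: str, item: str) -> None:
        self._data.setdefault(user_id, []).append(item)

    def items(self, user_id: str) -> List[str]:
        return list(self._data.get(user_id, []))


class CartInstance:
    """One disposable app process: it keeps no cart state in its own memory."""

    def __init__(self, store: CartStore) -> None:
        self._store = store

    def add_item(self, user_id: str, item: str) -> None:
        self._store.add(user_id, item)

    def view(self, user_id: str) -> List[str]:
        return self._store.items(user_id)


store = InMemoryStore()

first = CartInstance(store)
first.add_item("u-1", "book")
del first  # the instance "dies"

second = CartInstance(store)  # a fresh instance picks up the slack
print(second.view("u-1"))  # ['book']
```

Had the cart lived in a dictionary inside `CartInstance`, killing the first instance would have emptied it, and someone would be screaming.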

As soon as you split responsibilities across several services, the big questions arrive:

- Who owns which data?

- Do we share one giant database (spoiler: bad idea) or does each service get its own, replicating via events?

- Do we go for strong consistency (everyone always sees the same thing) at the cost of latency, or accept eventual consistency for better availability and speed?

There’s no single right answer, but there are very clear ways to get it wrong: the most common one is inventing microservices while leaving a central data monolith that everyone reads from and writes to happily. You’ve multiplied the complexity… without gaining any real autonomy.

So what’s all this good for, anyway?

The point of pulling all these fundamentals together isn’t so you can win arguments on X with strangers – it’s so you have a small mental framework to return to when you’re making concrete decisions.

If you’re debating whether to go from monolith to microservices, don’t just talk about “trends” or “infinite scalability”: go back to modular monoliths, latency, state, and teams, and ask yourself what real problem you’re trying to solve.

If you’re designing resilience stuff like timeouts, circuit breakers, or queues, fit them into your latency and events vision – not as random band-aids.

If the business shows up with multi-region and weird consistency requirements, review what data model you have and whether you can afford eventual consistency somewhere without the lawyers losing their minds.

At the end of the day, “modern architecture” isn’t about winning a contest for the prettiest diagrams. It’s about answering, with a minimum of confidence and knowledge, three questions:

- What world does my system actually run in (cloud, network, users, data)?

- What decisions will let me keep changing it five years from now without burning it all down?

- What problems am I actually solving… and which ones am I inventing for sport?

If you can pull that off, it doesn’t much matter whether the final picture has a perfectly respectable modular monolith, three well-placed microservices and a handful of events, or an entire zoo in Kubernetes: what you’ll have won’t be “the latest thing” – it’ll be something better… something that works today and that your future self won’t want to destroy tomorrow.

Quick glossary

A jargon translator so nobody gets left out of the conversation.

- CI/CD (Continuous Integration / Continuous Delivery): automation practices that let you merge code changes frequently (CI) and ship them to production quickly and reliably (CD), instead of doing manual deployments every full moon.

- Circuit breaker: a resilience pattern that “trips the circuit” when a service you’re calling starts failing, preventing your system from waiting forever and dragging the failure into a cascade.

- Cloud-native: designing an application for the cloud from the start, assuming instances are ephemeral, scaling is horizontal, and configuration lives outside the code. Not the same as stuffing a .war into an AWS VM and declaring victory.

- Eventual consistency: a model where, after a change, different parts of the system may see slightly different data for a short time but will eventually converge to the same state. The opposite of “everyone sees the same thing instantly” (strong consistency).

- Horizontal / vertical scaling: scaling vertically means giving more power to a single machine (more CPU, more RAM); scaling horizontally means adding more small machines that share the work. The cloud favors the latter.

- ML (Machine Learning): techniques that let a system learn patterns from data instead of following hand-written rules.

- MVP (Minimum Viable Product): the smallest version of a product that lets you validate an idea with real users before spending months on features nobody may want.

- ORM (Object-Relational Mapping): a software layer that translates between the objects in your code and the tables in a relational database, so you don’t have to hand-write SQL in every corner.

Sources and references

Don’t just take some random person on the internet at their word – here are the sources so you can verify, argue, and correct me if needed.

- Architecture Comparison Tutorial - Apptastic Coder. Comparison of modern architecture styles.

- 12-Factor Cloud-Native Apps - Pradeep Loganathan. The twelve factors applied to cloud-native applications.

- Distributed Data Stores: Trade-offs in Consistency, Availability and Latency - Developers Heaven. Trade-offs between consistency, availability, and latency in distributed stores.

- Exploring Modern Architectures: Modular Monoliths & Event-Driven - Rich Mac (DEV). Modular monoliths and event-driven architecture.

- The Dark Side of Distributed Systems - ByteByteGo. The hidden cost of distributed systems.

- The Twelve-Factor App - Adam Wiggins. Methodology for building modern SaaS applications.

- Creating a Monolith After Making Microservices - r/softwarearchitecture. Thread on going back to a monolith after trying microservices.

- Modular Software Architecture - Pretius. Practical guide to modular software architecture.

- Software Architecture: Big Ball of Mud and Beyond - YouTube. Talk on architectural patterns and the big ball of mud.

- Every Time I’ve Regretted an Architectural Decision… - Milan Jovanovic (LinkedIn). Reflection on architectural decisions and their consequences.

- Monolith vs Event-Driven Architecture - Simple AWS Newsletter. Comparison between monolith and event-driven architecture.

- Multi-Region: Consistency vs Latency Trade-offs - OurGrid. Consistency vs. latency trade-offs in multi-region deployments.

- Being Cloud-Native: 12-Factor App Design - Tyk. Cloud-native design with the twelve factors.

- Modular Monolith Architecture - Milan Jovanovic. Complete guide to the modular monolith pattern.

- Evolving the Twelve-Factor App - 12factor.net. Evolution and update of the twelve-factor methodology.